Can machine learning be used to reveal the secrets of the quark-gluon plasma?

Yes, but only with sophisticated new methods.

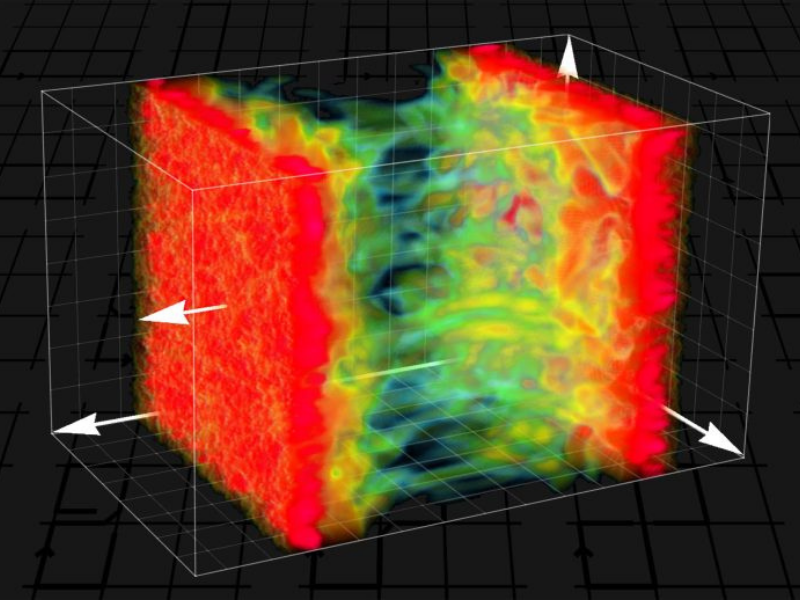

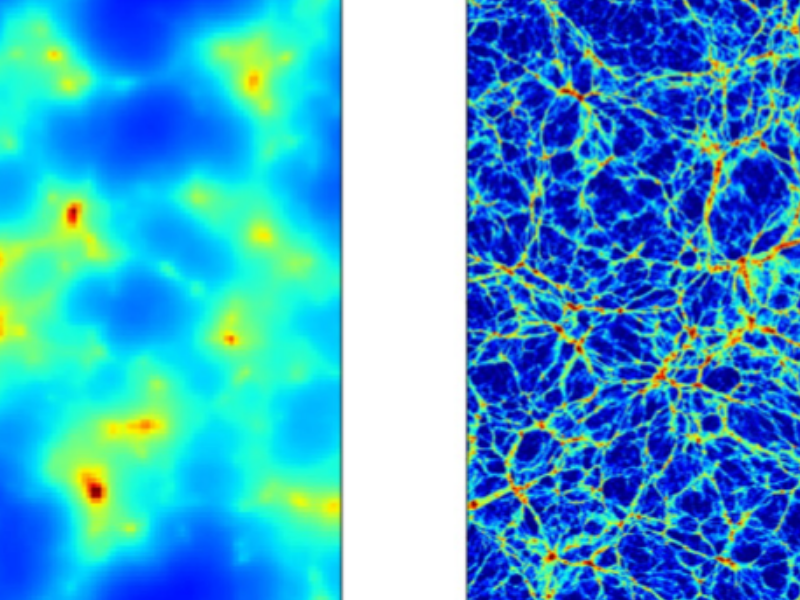

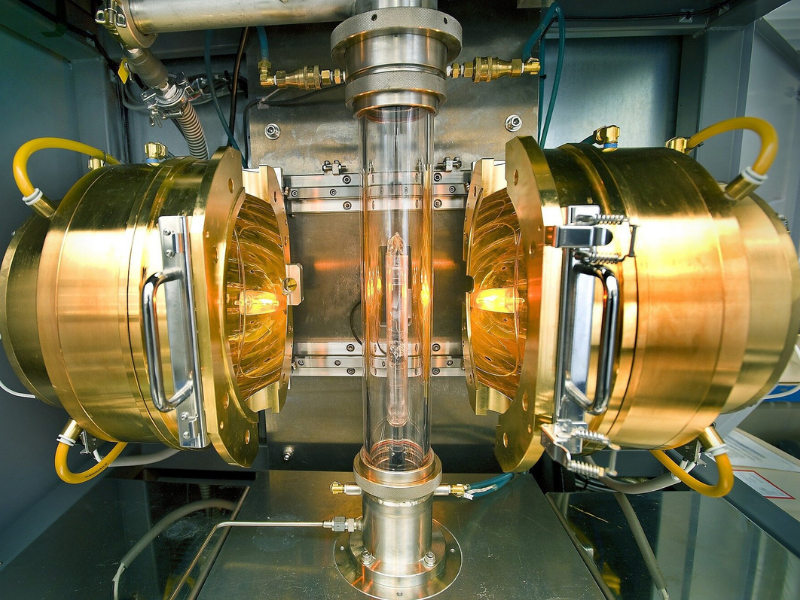

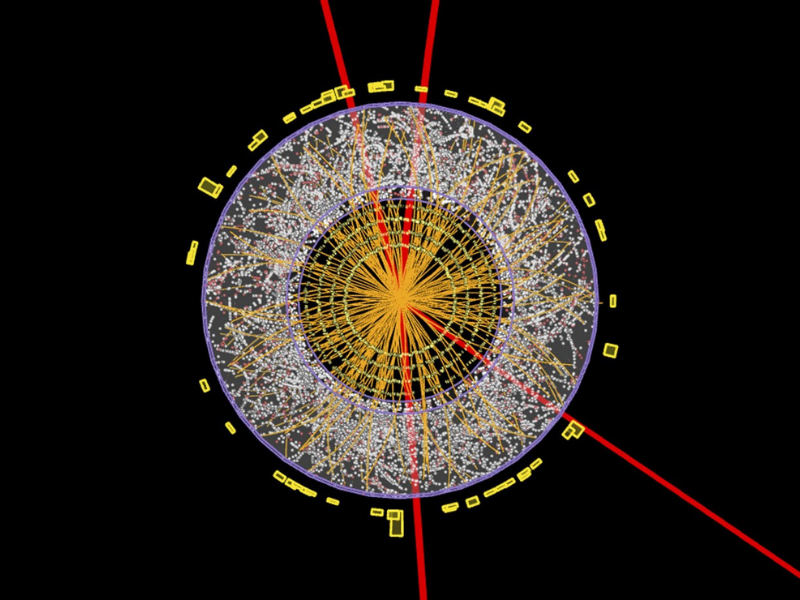

It could hardly be more complicated: tiny particles whir around wildly with extremely high energy, and many interactions take place in the tangled mess of quantum particles. The result is a state of matter called “quark-gluon plasma”. Immediately after the Big Bang, the entire universe was in this state. Today, it is produced in high-energy collisions of atomic nuclei, for example at CERN.

Such processes can only be studied using high-performance computers and very complex computer simulations whose results are difficult to evaluate. Using artificial intelligence or machine learning for this purpose therefore seems like an obvious idea. Ordinary machine-learning algorithms, however, are not suitable for this task. The mathematical properties of particle physics require a very special structure of neural networks. At TU Wien (Vienna), it has now been shown how neural networks can be used successfully for these challenging tasks in particle physics.

Neural networks

“Simulating a quark-gluon plasma as realistically as possible requires an extremely large amount of computing time,” says Dr. Andreas Ipp from the Institute for Theoretical Physics at TU Wien. “Even the largest supercomputers in the world are overwhelmed by this.” It would therefore be desirable not to calculate every detail precisely, but to recognize and predict certain properties of the plasma with the help of artificial intelligence.

For this purpose, neural networks are used, similar to those used for image recognition. Artificial “neurons” are linked together on the computer in a way similar to neurons in the brain. This creates a network that can recognize, for example, whether a cat is visible in a given image.

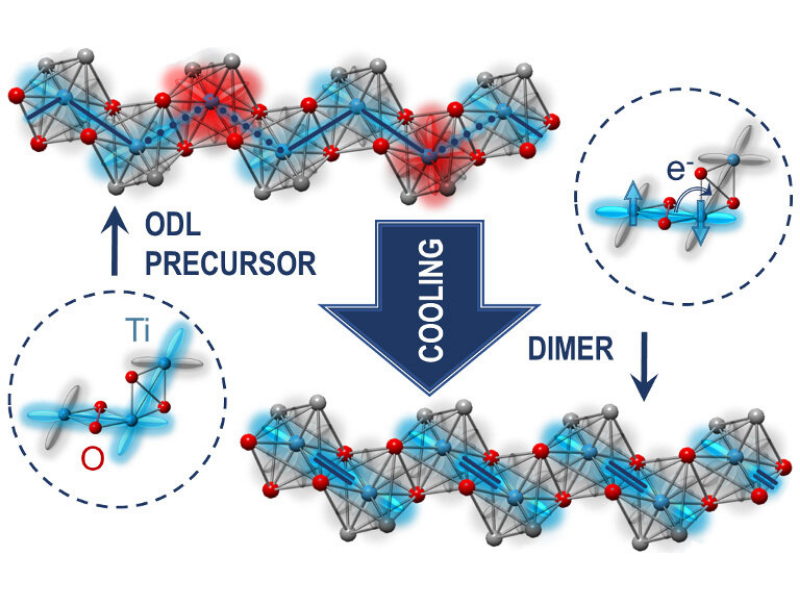

However, there is a serious problem when applying this technique to the quark-gluon plasma. The quantum fields used to describe the particles and the forces between them mathematically can be represented in various ways. “This is referred to as gauge symmetry,” says Ipp. “The basic principle behind this is something we are familiar with. If I calibrate a measuring device differently, for example, if I use the Kelvin scale instead of the Celsius scale for my thermometer, I get completely different numbers, even though I am describing the same physical state. It is similar with quantum theories, except that there the allowed changes are mathematically much more complicated.” Mathematical objects that look completely different at first glance may in fact describe the same physical state.
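The idea that very different numbers can describe the same physical state can be illustrated with a toy model. The sketch below is not taken from the TU Wien work: it assumes a small two-dimensional lattice with the simplest gauge group, U(1), where each link carries a complex number of length one (the quark-gluon plasma involves more complicated, matrix-valued fields). The names `random_links`, `gauge_transform`, and `plaquette` are hypothetical helpers chosen for this illustration. A gauge transformation changes every link value, yet the product of links around each small square, a standard gauge-invariant quantity in lattice field theory, stays the same.

```python
import cmath
import math
import random

N = 4  # toy lattice of N x N sites with periodic boundaries (an assumption)

def random_links(rng):
    # links[mu][x][y]: unit complex number on the link leaving site (x, y)
    # in direction mu (0 = x-direction, 1 = y-direction).
    return [[[cmath.exp(1j * rng.uniform(0, 2 * math.pi)) for _ in range(N)]
             for _ in range(N)] for _ in range(2)]

def gauge_transform(links, omega):
    # U_mu(x) -> omega(x) * U_mu(x) * conj(omega(x + mu))
    out = [[[0j] * N for _ in range(N)] for _ in range(2)]
    for x in range(N):
        for y in range(N):
            out[0][x][y] = (omega[x][y] * links[0][x][y]
                            * omega[(x + 1) % N][y].conjugate())
            out[1][x][y] = (omega[x][y] * links[1][x][y]
                            * omega[x][(y + 1) % N].conjugate())
    return out

def plaquette(links, x, y):
    # Product of the four links around the unit square starting at (x, y).
    return (links[0][x][y]
            * links[1][(x + 1) % N][y]
            * links[0][x][(y + 1) % N].conjugate()
            * links[1][x][y].conjugate())

rng = random.Random(0)
links = random_links(rng)
# A different, arbitrary "calibration" at every lattice site.
omega = [[cmath.exp(1j * rng.uniform(0, 2 * math.pi)) for _ in range(N)]
         for _ in range(N)]
transformed = gauge_transform(links, omega)

sites = [(x, y) for x in range(N) for y in range(N)]
max_link_change = max(abs(transformed[mu][x][y] - links[mu][x][y])
                      for mu in range(2) for x, y in sites)
max_plaquette_change = max(abs(plaquette(transformed, x, y) - plaquette(links, x, y))
                           for x, y in sites)

print("largest change in a raw link value:", max_link_change)
print("largest change in a plaquette:     ", max_plaquette_change)
```

The raw link values play the role of the thermometer reading, which depends on the chosen scale, while the plaquettes play the role of the physical state, which does not.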

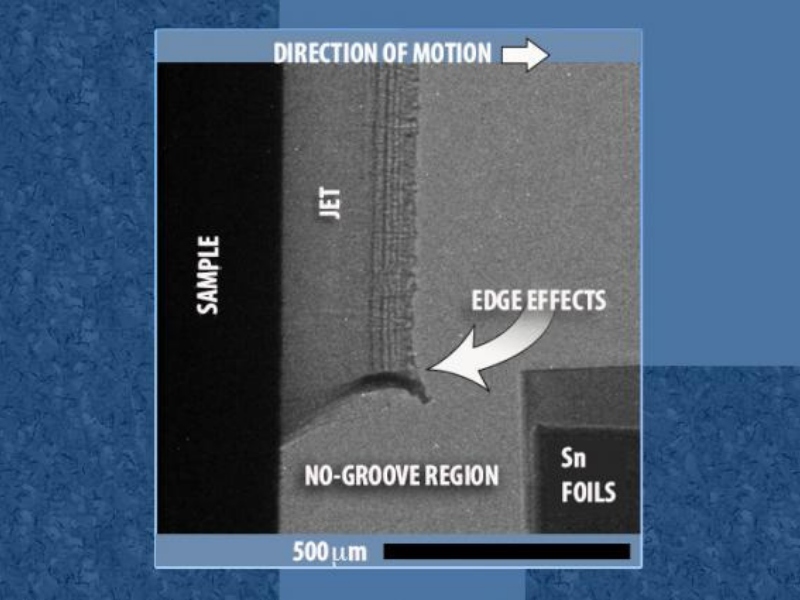

Gauge symmetries built into the structure of the network

“If you do not take these gauge symmetries into account, you cannot meaningfully interpret the results of the computer simulations,” says Dr. David I. Müller. “Teaching a neural network to figure out these gauge symmetries on its own would be extremely difficult. It is much better to start by designing the structure of the neural network in such a way that the gauge symmetry is automatically taken into account. This ensures that different representations of the same physical state also produce the same signals in the neural network,” says Müller. “That is exactly what we have now succeeded in doing. We have developed completely new network layers that automatically take gauge invariance into account.” In some test applications, it was shown that these networks can indeed learn better how to deal with the simulation data of the quark-gluon plasma.

“With such neural networks, it becomes possible to make predictions about the system, for example, to estimate what the quark-gluon plasma will look like at a later point in time without actually having to calculate every intermediate step in time in detail,” says Andreas Ipp. “And at the same time, it is guaranteed that the system only produces results that do not contradict gauge symmetry, in other words, results which make sense at least in principle.”

It will be some time before it is possible to fully simulate collisions of atomic nuclei at CERN with such methods. Still, the new type of neural network offers a promising and completely new tool for describing physical phenomena for which all other computational methods may never be powerful enough.
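As a rough illustration of what building the symmetry into the structure can mean, the sketch below compares two tiny models on a ring of U(1) link variables. It is a simplified toy under stated assumptions, not the network layers developed at TU Wien: `naive_model` and `invariant_model` are hypothetical names, and the weights are arbitrary numbers rather than trained parameters. The naive model reads the raw link values, so two representations of the same physical state generally give different outputs. The invariant model first forms the closed loop of all links, which a gauge transformation cannot change, and only then applies its weights.

```python
import cmath
import math
import random

def gauge_transform(links, omega):
    # Ring of n sites: link i connects site i to site (i + 1) % n.
    n = len(links)
    return [omega[i] * links[i] * omega[(i + 1) % n].conjugate()
            for i in range(n)]

def naive_model(links, weights):
    # Hypothetical baseline: one unit fed directly with raw link values.
    return math.tanh(sum(w * u.real for w, u in zip(weights, links)))

def invariant_model(links, w_re, w_im):
    # Hypothetical invariant unit: multiply all links around the closed ring,
    # then apply the weights to that gauge-invariant number.
    loop = 1 + 0j
    for u in links:
        loop *= u
    return math.tanh(w_re * loop.real + w_im * loop.imag)

rng = random.Random(1)
n = 6
links = [cmath.exp(1j * rng.uniform(0, 2 * math.pi)) for _ in range(n)]
omega = [cmath.exp(1j * rng.uniform(0, 2 * math.pi)) for _ in range(n)]
weights = [rng.uniform(-1, 1) for _ in range(n)]

# A second representation of the same physical state.
same_state = gauge_transform(links, omega)

naive_gap = abs(naive_model(links, weights) - naive_model(same_state, weights))
invariant_gap = abs(invariant_model(links, 0.7, -0.3)
                    - invariant_model(same_state, 0.7, -0.3))

print("naive model output difference:    ", naive_gap)
print("invariant model output difference:", invariant_gap)
```

Whatever weights are chosen, the invariant model cannot tell the two representations apart, so nothing about the symmetry has to be learned from data.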

Read the original article on Scitech Daily.

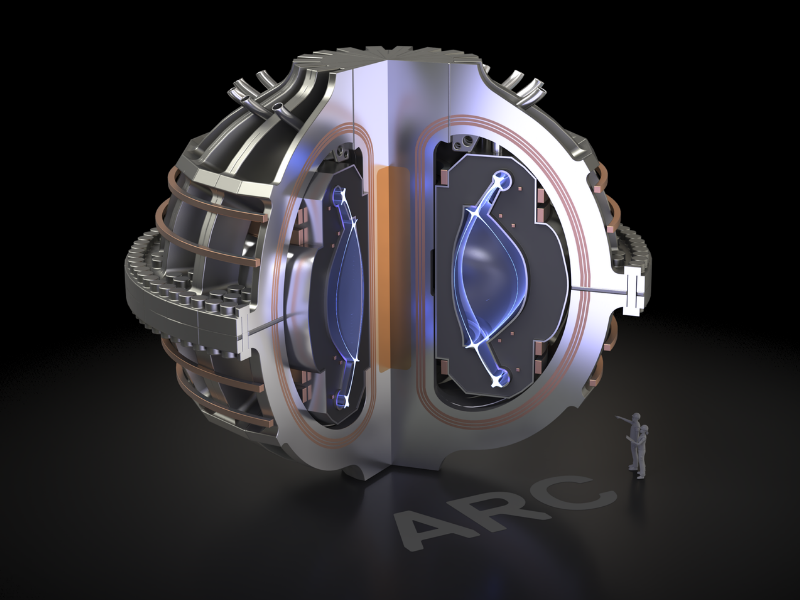
