University of Houston engineers developed a breakthrough thin-film material that boosts AI speed while cutting energy use.

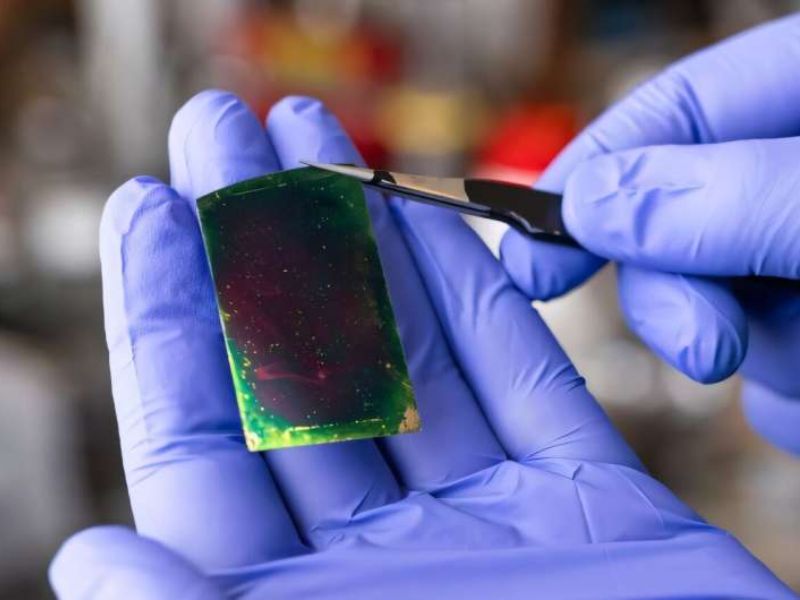

Published in ACS Nano, the study introduces a 2D dielectric thin film that replaces heat-generating chip components, reducing energy use and AI-related heat.

“AI has driven our energy needs sky-high,” said Alamgir Karim, Dow Chair and Welch Foundation Professor in the Brookshire Department of Chemical and Biomolecular Engineering at UH.

Massive Cooling Systems Drive Up Power Use in AI Data Centers

He noted that AI data centers rely on power-hungry cooling systems to keep thousands of servers running at full speed and to extend chip lifespans.

To reduce power consumption while boosting performance, Karim and his former Ph.D. student, Maninderjeet Singh, used Nobel Prize–winning organic framework materials to create these dielectric films.

“These next-generation materials could greatly boost AI and electronic device performance,” said Singh, who developed them at UH with Professor Shaffer and Ph.D. student Schroeder.

Dielectric materials differ in how much electrical energy they store. High-permittivity (high-k) dielectrics hold more charge and therefore generate more heat, while low-k materials store less and stay cooler. Karim focused on lightweight, carbon-based low-k dielectrics that speed signal transmission and reduce delays.

“Low-k materials insulate circuits, allowing faster, cooler, and more efficient chip performance,” Karim explained.
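To see why a lower dielectric constant matters, it helps to run the textbook numbers. The sketch below is only an illustration, not the researchers' model: it treats two neighboring wires as a parallel-plate capacitor (C = k·ε0·A/d) and compares the RC delay and stored energy for two k values. The value 3.9 is the commonly cited figure for silicon dioxide; the low-k value of 2.0, the geometry, the wire resistance, and the voltage are all hypothetical placeholders and do not come from the study.

```python
# Illustrative only: textbook parallel-plate and RC formulas with assumed inputs.

EPSILON_0 = 8.854e-12  # vacuum permittivity in farads per meter

K_SIO2 = 3.9           # commonly cited dielectric constant of silicon dioxide
K_LOW_EXAMPLE = 2.0    # hypothetical low-k value, NOT taken from the study

# Hypothetical interconnect geometry and electrical values (assumptions).
AREA_M2 = 1e-12        # facing area between two wires: 1 square micrometer
GAP_M = 50e-9          # insulator thickness between them: 50 nanometers
RESISTANCE_OHM = 100.0 # wire resistance
VOLTAGE_V = 0.8        # signal voltage


def plate_capacitance(k, area_m2, gap_m):
    """Parallel-plate capacitance C = k * eps0 * A / d, in farads."""
    return k * EPSILON_0 * area_m2 / gap_m


def rc_delay(resistance_ohm, capacitance_f):
    """RC time constant in seconds; a rough proxy for signal delay."""
    return resistance_ohm * capacitance_f


def stored_energy(capacitance_f, voltage_v):
    """Energy held in the capacitor at a given voltage: 0.5 * C * V^2, in joules."""
    return 0.5 * capacitance_f * voltage_v ** 2


for label, k in (("reference (k=3.9)", K_SIO2), ("low-k example (k=2.0)", K_LOW_EXAMPLE)):
    c = plate_capacitance(k, AREA_M2, GAP_M)
    print(label, "delay:", rc_delay(RESISTANCE_OHM, c), "s",
          "energy:", stored_energy(c, VOLTAGE_V), "J")
```

Because capacitance scales linearly with k, both the delay and the stored energy in this simple model drop in the same proportion as the dielectric constant, whatever geometry is assumed. Real chip interconnects are more complicated, so the output should be read as a trend rather than a prediction.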

New Carbon-Based Thin Films Engineered and Analyzed for Next-Generation Low-k Electronics

The researchers made porous, sheet-like carbon films and, with student Saurabh Tiwary, analyzed their electronic properties for low-k devices.

Karim and Singh found that integrating low-k 2D films into chips could greatly reduce AI data centers’ power use, thanks to their ultralow dielectric constant, high breakdown strength, and strong thermal stability.

Shaffer and Schroeder used synthetic interfacial polymerization to form strong, layered crystalline films from molecules dissolved in two immiscible liquids. This approach was pioneered by 2025 Chemistry Nobel laureate Omar M. Yaghi of UC Berkeley and his collaborators.

Read the original article on: Tech Xplore
