Teaching robots new skills once demanded coding expertise, but a new wave of robots may soon learn from nearly anyone. Engineers are creating robotic assistants that can “learn from demonstration,” a more intuitive training method where a person guides the robot through a task in one of three ways: by using a remote control like a joystick, physically moving the robot, or performing the task while the robot observes and imitates.

Typically, learning-by-demonstration robots rely on just one of these training modes. However, MIT engineers have introduced a versatile, three-in-one training interface that enables robots to learn using any of the three methods.

A Flexible, Hands-On Tool for Teaching Robots Any Task

This innovative tool is a handheld, sensor-equipped device that attaches to standard collaborative robotic arms. It lets users teach a robot by remotely controlling it, guiding it manually, or demonstrating the task themselves—whichever approach is most convenient or effective for the situation.

The MIT team evaluated their new device, dubbed a “versatile demonstration interface,” using a standard collaborative robotic arm. They enlisted volunteers with manufacturing experience to use the interface to carry out two hands-on tasks commonly performed in industrial settings.

Researchers say the new interface offers greater training flexibility, allowing more people to teach robots. It could also allow robots to acquire a more diverse set of skills. For example, one person could remotely train a robot to handle hazardous materials, another could guide it through packing by hand, and a third could demonstrate logo drawing for the robot to learn.

“Our aim is to build smart, skilled robotic teammates that work seamlessly with humans on complex tasks,” says Mike Hagenow, postdoctoral researcher at MIT. “Flexible training tools like this could be just as useful in homes or caregiving settings—not just on factory floors. Because hey, who wouldn’t want a robot that can fold laundry and understand nuance?”

Hagenow is set to present a paper on the new interface at the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) this October. The paper is also available on the arXiv preprint server.

Collaborative Effort by Experts Across MIT Departments

The research was co-authored by MIT colleagues Dimosthenis Kontogiorgos, a postdoc at the Computer Science and Artificial Intelligence Lab (CSAIL); Yanwei Wang, who recently completed a Ph.D. in electrical engineering and computer science; and Julie Shah, professor and head of the Department of Aeronautics and Astronautics.

At MIT, Julie Shah’s research group focuses on designing robots that can collaborate with humans in various environments—including workplaces, hospitals, and homes. A core aspect of her work is creating systems that allow people to teach robots new tasks or skills in real time, directly within their working environments.

For example, such systems could let a factory worker make quick, intuitive adjustments to a robot’s movements on the spot—without needing to halt operations and reprogram its software, a task that may require expertise the worker doesn’t have.

This latest project builds on a growing robot learning approach known as “learning from demonstration” (LfD), which emphasizes training robots through more natural and human-friendly interactions.

Three Core Approaches to Robot Training Identified

While reviewing existing LfD research, Shah and Hagenow identified three primary training approaches: teleoperation, kinesthetic teaching, and natural demonstration. Each method has strengths that may suit different users or tasks.

This led them to ask whether a single tool could combine all three approaches, making it easier for a broader range of people to teach robots a wider array of tasks.

“If we can integrate these three ways of interacting with a robot, we might unlock new possibilities for both people and applications,” Hagenow explains.

With this goal in mind, the team developed a new tool called the Versatile Demonstration Interface (VDI). This handheld device mounts to a standard collaborative robot arm and includes a camera, tracking markers, and force sensors to monitor movement and applied pressure.

Once attached to the robot, the VDI allows for full remote control of the robot, with the onboard camera capturing its movements as training data the robot can later use to learn the task independently. Alternatively, a person can physically guide the robot through a task with the VDI in place.

Detachable Design Enables Robots to Learn by Watching Human Demonstrations

The VDI can also be removed from the robot and used independently by a person to perform the task manually. As the user performs the task, the camera records the motion, and once the VDI is reattached, the robot can replicate the actions based on what it observed.
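
As a rough illustration of how demonstrations from all three modes could be stored in one common format, here is a minimal Python sketch. It is not the researchers' software: the names `DemoMode`, `Sample`, and `Demonstration`, the choice of fields, and the units are all assumptions made for this example, based only on the article's description of a device that tracks movement and applied force.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


class DemoMode(Enum):
    """The three training modes described in the article (names are hypothetical)."""
    TELEOPERATION = "teleoperation"  # remote control, e.g. a joystick
    KINESTHETIC = "kinesthetic"      # a person physically guides the arm
    NATURAL = "natural"              # device detached, person performs the task


@dataclass
class Sample:
    t: float         # seconds since the demonstration started
    position: Vec3   # tool position in metres (assumed unit)
    force: Vec3      # measured force in newtons (assumed unit)


@dataclass
class Demonstration:
    mode: DemoMode
    task: str
    samples: List[Sample] = field(default_factory=list)

    def record(self, t: float, position: Vec3, force: Vec3) -> None:
        """Append one time-stamped motion and force reading."""
        self.samples.append(Sample(t, position, force))

    def duration(self) -> float:
        """Elapsed time between the first and last sample."""
        if len(self.samples) < 2:
            return 0.0
        return self.samples[-1].t - self.samples[0].t

    def peak_force(self) -> float:
        """Largest force magnitude seen during the demonstration."""
        return max(
            (sum(c * c for c in s.force) ** 0.5 for s in self.samples),
            default=0.0,
        )


# Hypothetical usage: a short natural demonstration of a pressing motion.
demo = Demonstration(DemoMode.NATURAL, "press-fitting")
demo.record(0.0, (0.0, 0.0, 0.10), (0.0, 0.0, 0.0))
demo.record(0.5, (0.0, 0.0, 0.05), (0.0, 0.0, 2.0))
demo.record(1.0, (0.0, 0.0, 0.02), (0.0, 0.0, 4.0))
```

Because the record has the same shape regardless of mode, a learning pipeline built this way could in principle consume teleoperated, kinesthetic, and natural demonstrations interchangeably, with the `mode` field kept only as metadata.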

To evaluate the device’s ease of use, the researchers took the VDI and a robotic arm to a local innovation center where manufacturing professionals explore technologies that can enhance factory operations.

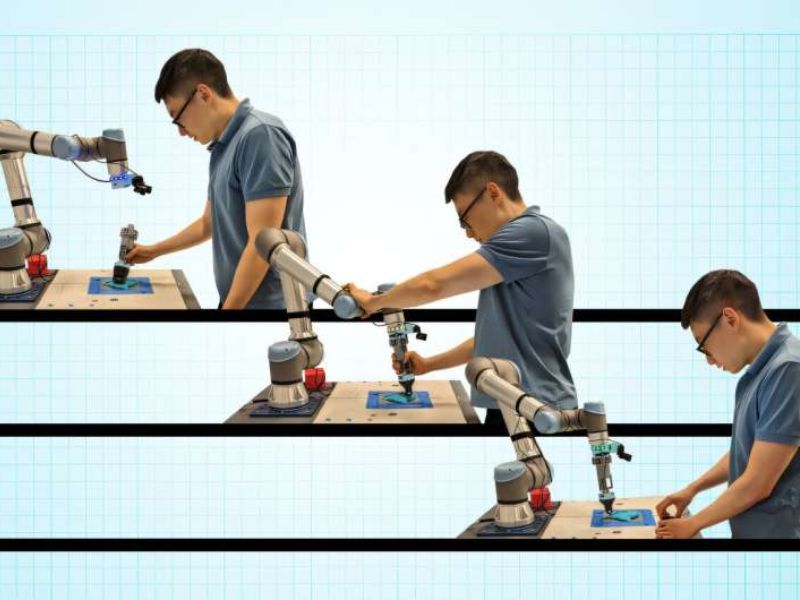

They conducted an experiment where volunteers used the VDI in all three training modes to teach the robot two standard manufacturing tasks: press-fitting and molding. In the press-fitting task, participants trained the robot to press pegs into holes—representing a common fastening procedure on factory floors.

In the molding task, volunteers trained the robot to press and roll a soft, dough-like material evenly around a central rod, a process similar to certain thermomolding techniques.

Testing All Three Training Modes on Real-World Manufacturing Tasks

Each participant performed both the press-fitting and molding tasks using all three training methods: joystick teleoperation, physical guidance, and direct demonstration with the detached VDI. In the final method, the robot recorded the interface's force and movement data for later learning.

The researchers observed that volunteers generally favored the natural demonstration method over teleoperation and kinesthetic guidance. However, the manufacturing professionals also identified use cases where each approach could be particularly effective. For example, teleoperation may be ideal when training a robot to manage dangerous or toxic materials.

Kinesthetic teaching could be advantageous when guiding robots in tasks involving large or heavy items that require precise repositioning. Natural demonstration, on the other hand, is especially useful for showing tasks that demand precision and delicate handling.

“We envision this demonstration interface being used in dynamic manufacturing settings, where a single robot might assist with a variety of tasks—each better taught through a different method,” says Hagenow. He plans to refine the interface based on user feedback and use the updated version in future robot training experiments.

“This study shows that we can make collaborative robots more adaptable by designing interfaces that expand how end-users interact with them during the teaching process,” he adds.

Read the original article on: Techxplore
