John Hopfield and Geoffrey Hinton, two pioneers of artificial intelligence, were awarded the Nobel Prize in Physics on Tuesday for work that laid the foundations of machine learning, a technology that is transforming how we live and work while also posing new risks to humanity.

Geoffrey Hinton, known as the godfather of artificial intelligence, is a dual citizen of Canada and Britain and works at the University of Toronto, while John Hopfield, an American, is based at Princeton.

“These two gentlemen were the true pioneers,” said Nobel physics committee member Mark Pearce. According to Ellen Moons of the Nobel committee, the researchers’ work on artificial neural networks—computer systems modeled after the brain’s neurons—has become central to science, medicine, and everyday life.

The Lasting Impact of Early AI Research and Its Future Potential

Hopfield, whose 1982 research laid the foundation for Hinton’s work, told the Associated Press that he is continually amazed by its impact. Hinton, in a press call with the Royal Swedish Academy of Sciences, predicted AI will have a “huge influence” on civilization, improving productivity and health care, comparing its potential to the Industrial Revolution.

“Instead of surpassing humans in physical strength, AI will surpass us in intellectual ability,” Hinton said, noting both the exciting possibilities and the need for caution regarding the potential risks, especially the threat of AI becoming uncontrollable.

Balancing AI’s Promise with Ethical Concerns

The Nobel committee also acknowledged concerns about the potential downsides of AI. Ellen Moons noted that while AI offers “enormous benefits,” its rapid advancement has sparked worries about humanity’s future. She emphasized that it is humanity’s collective responsibility to use this technology safely and ethically for the greatest good.

Geoffrey Hinton, who left his role at Google to speak more openly about the risks of AI, shares these concerns. “I worry that this could lead to systems more intelligent than us eventually taking control,” he said.

Hopfield, who signed early petitions urging strong regulation of AI, likened the technology’s risks and benefits to those of research on viruses and nuclear energy, both of which can help or harm society.

Hopfield, who was staying with his wife at a cottage in Hampshire, England, said he was met with a flood of emails after grabbing a coffee and getting his flu shot.

“I’ve never seen that many emails in my life,” he remarked. He mentioned that a bottle of champagne and a bowl of soup were ready, but doubted there were any other physicists in the area to celebrate with him.

Hinton expressed surprise at receiving the honor.

“I’m flabbergasted. I never expected this,” he said when contacted by the Nobel committee. He mentioned he was staying in a budget hotel without internet access.

In the 1980s, Hinton, now 76, helped develop a technique called backpropagation, which is crucial in teaching machines to “learn” by correcting their errors step by step until those errors all but disappear. The process resembles how a student learns from a teacher: an initial answer is graded, the mistakes are identified and fixed, and the cycle repeats until the answer matches the network’s version of reality.

The Unique Path of a Pioneering AI Scientist

Nick Frosst, Hinton’s former protégé and first hire at Google’s AI division in Toronto, noted that Hinton had an unconventional background as a psychologist who also dabbled in carpentry and was deeply curious about the workings of the mind. Frosst said, “His playfulness and genuine curiosity in addressing fundamental questions are key to his success as a scientist.”

Hinton didn’t stop with his pioneering 1980s work.

“He’s always trying bold ideas. Some succeed, some don’t, but all have advanced the field,” Frosst said.

A Pivotal Breakthrough in AI and the Legacy of Perseverance

In 2012, Hinton’s team won the ImageNet competition with a neural network, an approach that other researchers quickly imitated. ImageNet creator Fei-Fei Li called it “a pivotal moment in AI history.” Hinton, along with Yoshua Bengio and Yann LeCun, received the Turing Award in 2019. Reflecting on early doubts about his work, Hinton advised young researchers, “Don’t be discouraged if others call your work silly.”

Many of his students entered the tech industry, founding companies like Cohere and OpenAI. Hinton regularly uses AI tools and said, “I ask GPT-4 for answers—while it can hallucinate, it’s still a useful expert.”

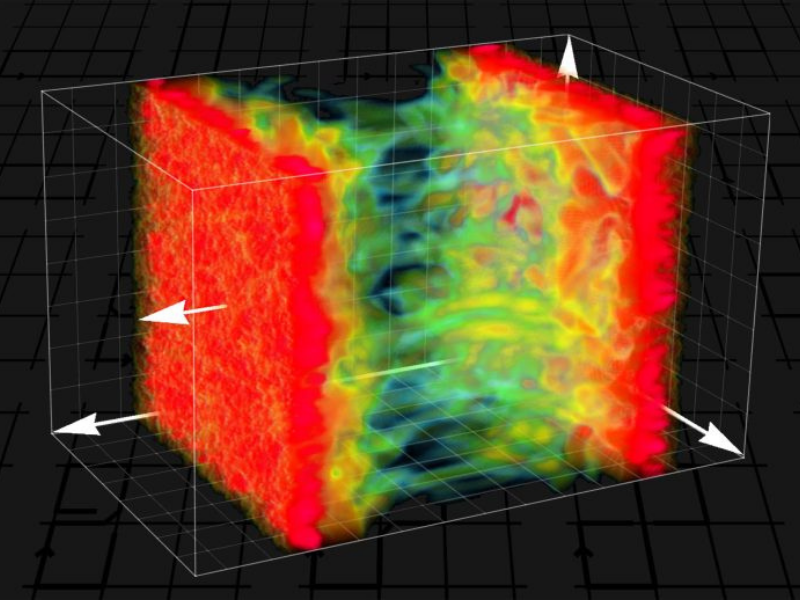

Hopfield, 91, created an associative memory that can store and reconstruct images and data patterns, as noted by the Nobel committee.

“What fascinates me most is how mind arises from machine,” Hopfield said in a 2019 video after receiving a physics prize.

Hinton expanded on Hopfield’s network with the Boltzmann machine, which the committee stated can learn to identify key features in data.

AI’s Recognition in Traditional Science and Interdisciplinary Innovation

Although there’s no Nobel for computer science, Fei-Fei Li pointed out that awarding a traditional science prize to AI pioneers shows the merging of disciplines. Bengio, who was mentored by Hinton and influenced by Hopfield, remarked that both winners “saw a significant, non-obvious connection between physics and learning in neural networks, forming the basis of modern AI.”

Not all of Hinton’s colleagues agree with his views on the risks of the technology he helped create.

Frosst has had many “spirited debates” with Hinton about AI risks and disagrees with some of his concerns, though he values Hinton’s openness. “We mainly differ on the timeline and specific technologies,” Frosst said. “I don’t think neural networks and language models currently pose an existential threat.”

Bengio, who has been vocal about AI risks, shares concerns with Hinton about the “loss of human control” and the morality of AI systems surpassing human intelligence. “We don’t have answers to these questions,” he said, “and we should ensure we do before building these machines.”

When asked if the Nobel committee considered Hinton’s warnings, Bengio dismissed the notion, saying, “We’re discussing early work when we thought everything would be fine.”

The Nobel announcements began Monday with Victor Ambros and Gary Ruvkun winning the medicine prize. The chemistry prize will be announced Wednesday, followed by literature on Thursday, the Nobel Peace Prize on Friday, and the economics award on October 14.

The prize includes 11 million Swedish kronor (about $1 million) from a bequest by Alfred Nobel. The laureates will receive their awards on December 10, the anniversary of Nobel’s death.

Read the original article on: Phys Org