A smart ring aims to turn voice into an interface and thoughts into actions, marking the dawn of conversational wearables and reshaping how we engage with AI.

For decades, our interaction with technology has revolved around screens, keyboards, and touch. Now a new generation of devices is reimagining that relationship, with speech, gesture, and presence emerging as the next interfaces.

Following pendants, bracelets, and smart cards, a minimalist concept takes shape: a ring that listens, processes, and understands.

Stream: A Mouse for Your Voice by Sandbar

Developed by Sandbar, a startup founded by two former Meta interface designers, the Stream is described as “a mouse for your voice.” Compact, lightweight, and intuitive, it lets users record thoughts, chat with an AI assistant, and control music through subtle gestures.

Sandbar CEO Mina Fahmi told TechCrunch that the idea grew out of personal frustration: while testing large language models, Fahmi found that an always-connected smartphone got in the way of capturing spontaneous ideas.

The outcome is a piece of conversational hardware. The Stream’s microphone activates only when the user touches the ring’s pad, so the wearer decides when it listens. It is sensitive enough to pick up even a whisper, and all recordings are automatically transcribed and organized in an AI-powered app.

Users can revisit past conversations and ideas through an interactive timeline and even personalize the assistant’s voice to mirror their own.

The Minds Behind Sandbar

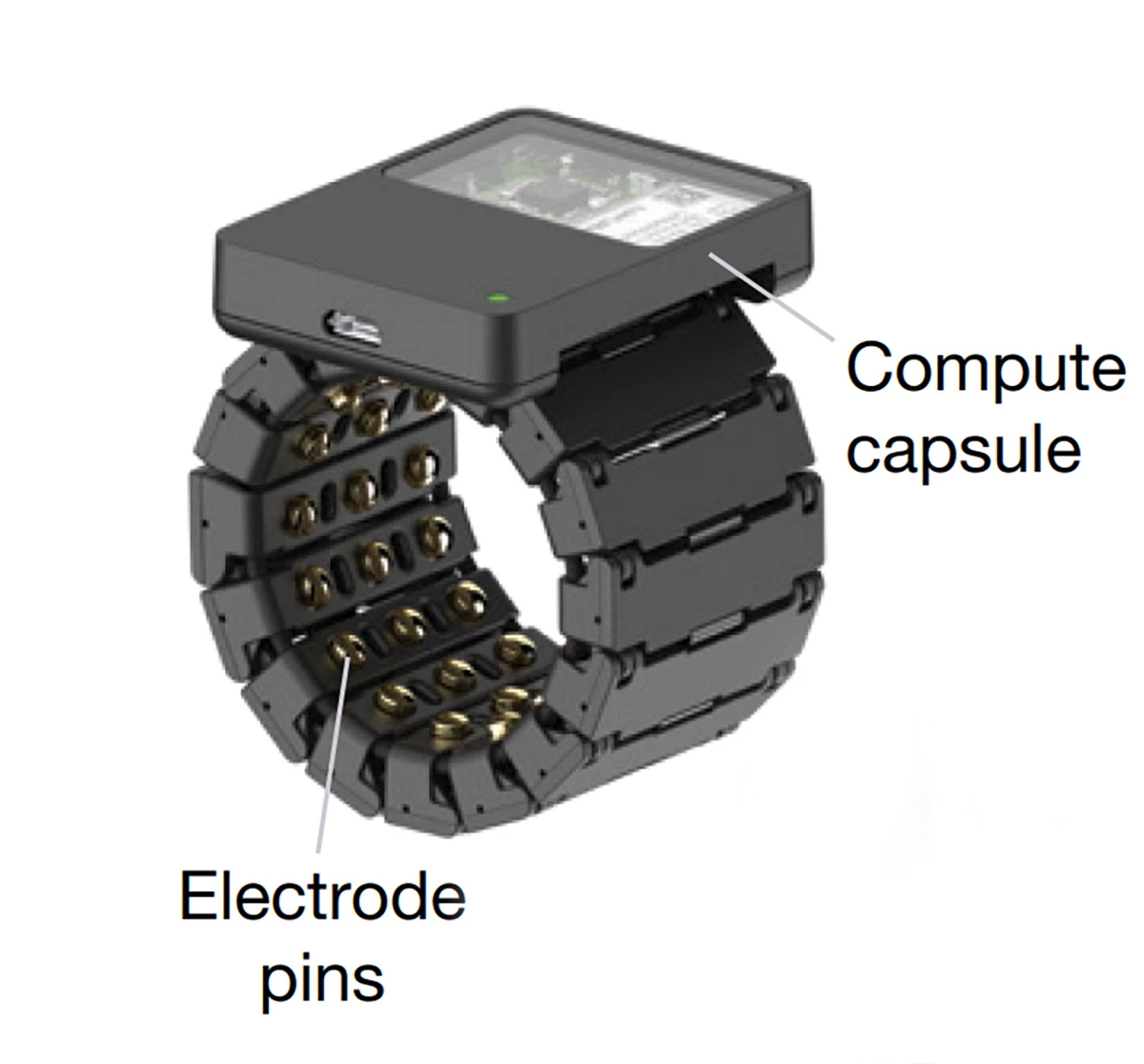

Sandbar was founded by Mina Fahmi and Kirak Hong, a former Google engineer and CTRL-Labs expert. The two draw on their experience in human-computer interaction to make the Stream bridge thought and action.

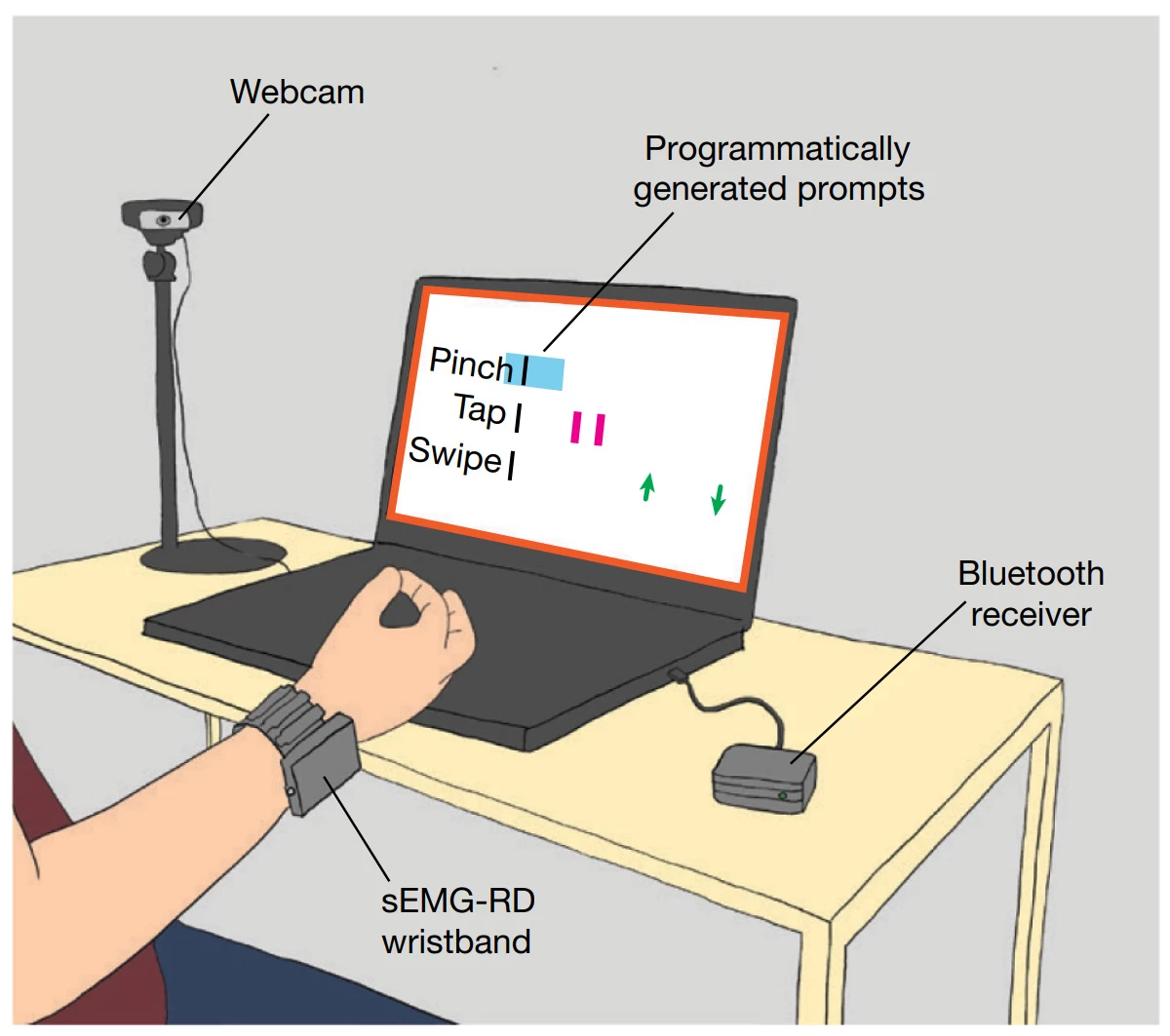

The device also supports media controls, recognizing gestures like swipes and taps to play, pause, or adjust volume—handy for on-the-go use without ever pulling out your phone.

The Stream enters a competitive market with rivals like Friend, Limitless, Bee, and Taya, all exploring AI wearables. What sets Sandbar apart is its minimalist vision and emphasis on idea capture rather than building a “digital companion.”

Pricing and Availability

The device is now available for pre-order, priced at US$249 for the silver version and US$299 for the gold, with deliveries planned for next summer in the Northern Hemisphere. No launch date has been announced for Brazil yet.

Stream represents an exploration into the future of human-AI interaction. If smartphones are the interface of the internet and smartwatches of the body, voice wearables could become the interface of the mind, linking thought and action in a single gesture.

While the spotlight remains on the race for chips and AI models, the next frontier may unfold quietly. The real question is whether whoever perfects the design of the human-machine experience will also define the next era of AI.

Read the original article on: Startse
