A Japanese tech startup has created a device that can transmit a person’s full-body movements and physical force.

H2L’s Capsule Interface enables immersive shared experiences between humans, robots, and avatars, expanding remote interaction possibilities.

Resembling a massage chair, the system turns the user’s body into a control interface that can operate a humanoid robot. The Tokyo-based company demonstrated its capabilities in a short video.

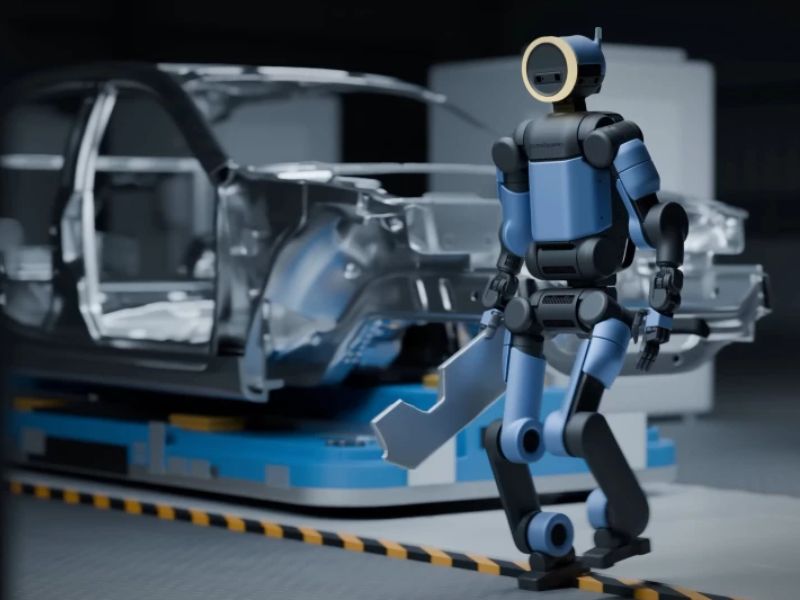

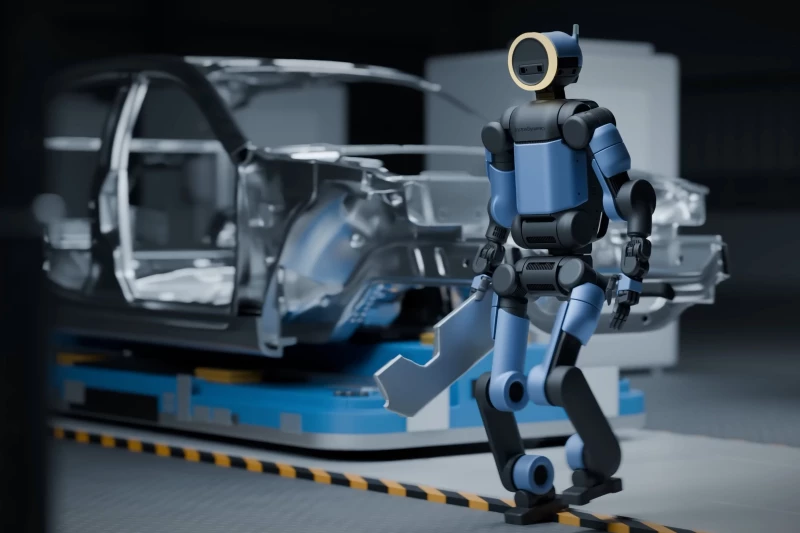

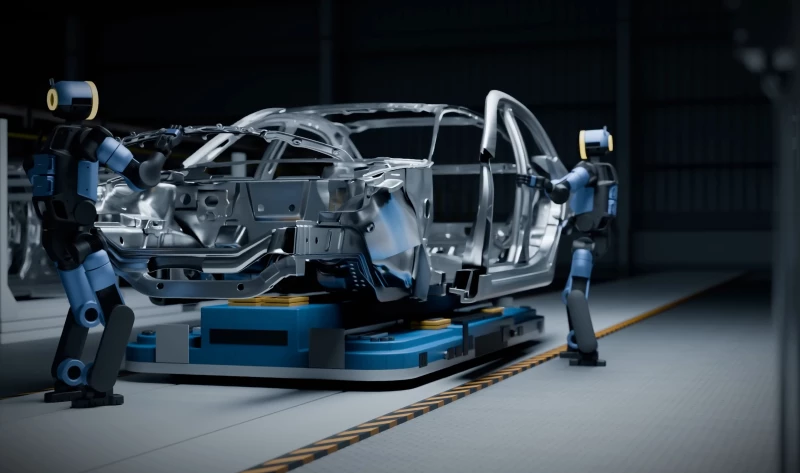

Real-Time Human Motion Replication in Humanoid Robots

In May 2025, researchers from Stanford University and Simon Fraser University introduced TWIST, an AI system that allows humanoid robots to accurately replicate human movements in real time.

In a video released by H2L, a woman remotely operates a humanoid robot from Unitree Robotics using the Capsule Interface system.

The robot performs tasks such as cleaning, lifting a box, and interacting with another person, demonstrating the system’s ability to transmit precise body movements and physical force.

Capturing Intent and Force Through Muscle Sensors

The Capsule Interface uses advanced muscle-displacement sensors that detect even the slightest changes in muscle tension. The technology captures a user’s intent and force by tracking subtle muscle movements in real time.

This differs from traditional teleoperation systems, which typically rely on motion-capture hardware such as IMUs, exoskeletons, or optical trackers to replicate a user’s movements.

H2L argues that motion data alone cannot capture the subtle details required for realistic physical and emotional interaction. While syncing visuals and positions can create a basic sense of control, it does not reproduce the forces applied or the effort felt during the action.

The Capsule Interface maps real-time muscle activity to robots, enhancing force awareness, haptic realism, and embodiment.
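H2L has not published how its signal processing works, so the following is only a rough illustration of the general idea of pairing a motion channel with an effort channel. Every name, range, and parameter here (`RobotCommand`, `normalize_effort`, the calibration values, the smoothing factor) is a hypothetical assumption, not part of H2L's system.

```python
from dataclasses import dataclass


@dataclass
class RobotCommand:
    """Hypothetical command pairing a pose target with an effort level."""
    joint_angle_deg: float  # pose target, e.g. from motion tracking
    effort: float           # 0.0 (relaxed) to 1.0 (maximum), from a muscle sensor


def normalize_effort(raw: float, rest: float, max_contraction: float) -> float:
    """Scale a raw muscle-displacement reading to 0..1 using per-user calibration."""
    if max_contraction <= rest:
        raise ValueError("max_contraction must be greater than rest")
    scaled = (raw - rest) / (max_contraction - rest)
    return min(1.0, max(0.0, scaled))  # clamp out-of-range readings


def smooth(previous: float, current: float, alpha: float = 0.5) -> float:
    """Exponential moving average to damp sensor jitter (alpha is assumed)."""
    return alpha * current + (1.0 - alpha) * previous


def build_command(joint_angle_deg: float, raw: float, rest: float,
                  max_contraction: float, previous_effort: float = 0.0) -> RobotCommand:
    """Combine the motion reading and the smoothed effort into one command."""
    effort = smooth(previous_effort, normalize_effort(raw, rest, max_contraction))
    return RobotCommand(joint_angle_deg, effort)
```

In this sketch, a motion-only system would send just `joint_angle_deg`; the extra `effort` field is what would let a robot distinguish a light touch from a firm grip at the same pose.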

Replicating Movement and Effort for Enhanced Immersion

This muscle-focused method enables robots to replicate not only a user’s movements but also the intensity behind them. For example, when lifting a heavy object, the robot reflects the level of effort the user exerts. This feedback enhances immersion and empathy by conveying both motion and force.

H2L envisions teleoperation as more than simple imitation: a deeply shared physical experience.

The Capsule Interface marks a new step in remote interaction, allowing users to transmit full-body movements and physical force to robots or avatars while sitting or lying down.

The device is equipped with speakers, a display, and the muscle-displacement sensors that relay a user’s intent and effort in real time.

Seamless, Low-Effort Integration into Everyday Furniture

H2L says the Capsule Interface can be built into a bed or chair and offers a low-effort, natural experience, in contrast to more complex traditional systems.

According to the company, the technology has many potential uses. In business settings, people could attend meetings or complete tasks in distant locations by remotely operating humanoid robots from home or nearby offices.

It could also allow delivery workers to lift and transport items remotely, reducing physical strain, and enable safe robot operation in dangerous environments such as disaster zones.

The approach could support dual-income households, assist older adults, and help with everyday chores such as cooking and cleaning. Farmers could also remotely control agricultural robots and share expertise, helping reduce labor demands.

The interface may also enable more immersive avatar communication in virtual environments, opening possibilities in healthcare, entertainment, and education. In the future, H2L plans to add proprioceptive feedback to enhance realism and expand shared experiences between humans and machines.

Read the original article on: Interesting Engineering
