To operate effectively in dynamic, unstructured settings, robots require AI trained on diverse sensory data. Microsoft Corp. today introduced Rho-alpha (ρα), the first robotics-focused model derived from its Phi family of vision-language models.

Rho-Alpha Boosts Robot Autonomy with VLA Capabilities

According to Microsoft, vision-language-action (VLA) models allow physical AI systems to sense, reason, and act with growing autonomy. The Phi-based models are designed to improve robot adaptability and reliability.

“Rho-alpha turns natural language commands into control signals for bimanual robots,” wrote Ashley Llorens, vice president of Microsoft Research Accelerator. “It goes beyond standard VLA models by incorporating a broader range of perceptual and learning modalities.”

Rho-alpha adds tactile sensing to its inputs, and Microsoft is also exploring force sensing, the company noted. On the learning side, Microsoft said Rho-alpha can continue to refine its performance through human feedback.

In the video below, Rho-alpha follows natural language prompts to interact with the BusyBox, a physical interaction benchmark recently introduced by Microsoft Research.

Rho-Alpha is Trained on Simulations, Demonstrations, and Web Data

Llorens wrote that Rho-alpha learns tactile perception from a combination of real-world demonstrations, simulations, and web-scale visual question-answering (VQA) data. He added that Microsoft plans to extend the model to additional sensing modalities for real-world applications.

Microsoft also acknowledged a shortage of scalable training data for robotics, particularly for tactile and other less common sensing inputs. To help close that gap, researchers use the open NVIDIA Isaac Sim framework to generate synthetic data through a multistage process based on reinforcement learning.

“Although teleoperated data collection has become common in robotics, it is not always feasible or possible,” said Abhishek Gupta, assistant professor at the University of Washington. “We are collaborating with Microsoft Research to augment physical robot datasets with diverse synthetic demonstrations generated through simulation and reinforcement learning.”

“Building foundation models capable of reasoning and action means addressing the limited availability of diverse real-world data,” said Deepu Talla, vice president of robotics and edge AI at NVIDIA. “By using NVIDIA Isaac Sim on Azure to create physically realistic synthetic datasets, Microsoft Research is speeding up the development of flexible models like Rho-alpha that can handle complex manipulation tasks.”

Humans Fine-Tune Microsoft’s Models with Feedback

Microsoft noted that even with enhanced perception, robots can still make mistakes during tasks. The company explained that corrective input from teleoperation tools, such as a 3D mouse, allows Rho-alpha to keep learning.

In the video below, Microsoft shows Rho-alpha controlling two UR5e cobot arms fitted with tactile sensors as they insert a plug. When the right arm struggles with the task, a human operator provides real-time guidance so that it succeeds.

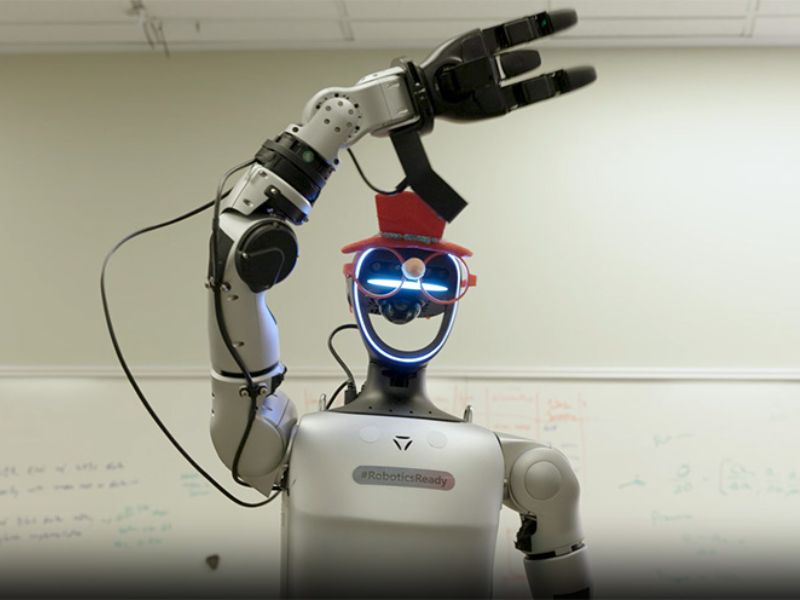

“Our team is optimizing Rho-alpha’s training and dataset for better bimanual task performance,” said Llorens. “Researchers are testing the model on dual-arm and humanoid robots, with a technical paper coming soon.”

Microsoft added that it aims to collaborate with robotics manufacturers, integrators, and end users to explore how Rho-alpha and its tools can help train, deploy, and continuously refine cloud-hosted physical AI using their own data. The company invited interested parties to join its Research Early Access Program.

Read the original article on: The Robot Report