What happens when you merge Apple’s Siri and Amazon’s Alexa with Boston Dynamics’ four-legged robots? The result is “Astro,” an intelligent, hearing-and-seeing robodog.

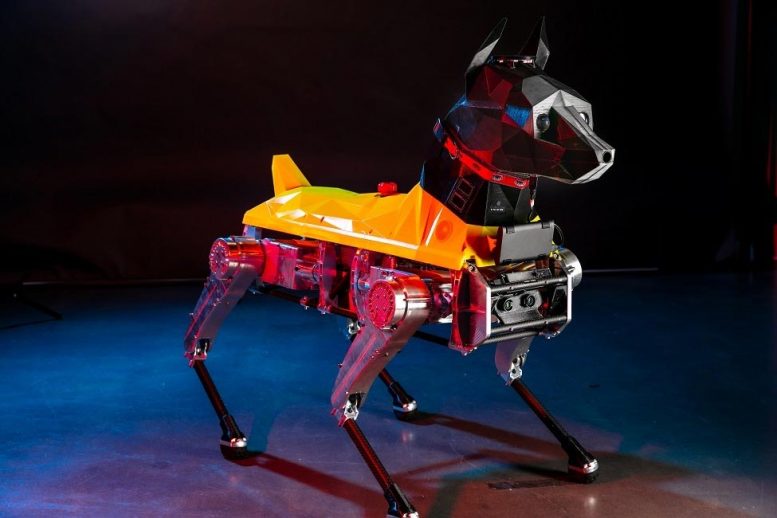

Researchers at Florida Atlantic University’s Machine Perception and Cognitive Robotics Laboratory (MPCR), part of the Center for Complex Systems and Brain Sciences in FAU’s Charles E. Schmidt College of Science, are bringing one of the world’s few quadruped robots to life. Astro stands out as the only one with a 3D-printed Doberman-like head housing a computerized brain.

Learning Through Experience

Astro doesn’t just resemble a dog; he learns like one as well. Rather than running on preprogrammed routines, Astro is driven by a deep neural network, a computerized simulation of a brain, which lets him learn from experience and perform practical, “dog-like” tasks.

Equipped with sensors, cameras, radar, and a directional microphone, this 100-pound robot is still a “puppy-in-training.” Like a real dog, he can follow basic commands such as “sit,” “stand,” and “lie down.” Over time, Astro is expected to learn to read hand signals, recognize colors and faces, understand multiple languages, work with drones, and identify other dogs.

Astro on Duty

Astro’s main role is detecting guns, explosives, and gun residue to aid law enforcement and security personnel. He can also be programmed to serve as a guide dog or to monitor health. In addition, the MPCR team is training him for search-and-rescue missions, such as hurricane reconnaissance, and for military operations.

Astro can navigate rough terrain and respond in real time to keep humans and animals safe. He carries more than a dozen sensors (optical, auditory, chemical, and radar) and processes their data on a set of Nvidia Jetson TX2 GPUs that together perform about four trillion calculations per second, which allows him to act autonomously. With this hardware he can scan thousands of faces, detect airborne substances, and hear distress signals outside the range of human hearing. FAU’s MPCR team plans to give him a vast database of experiences to draw on so that he can make quick, informed decisions.

The Minds Behind Astro: A Multidisciplinary Team at FAU

Astro’s team includes neuroscientists, IT specialists, artists, biologists, psychologists, and FAU students from high school to graduate level. Leading the project are Elan Barenholtz, Ph.D., associate professor of psychology, co-director of FAU’s MPCR laboratory, and member of FAU’s Brain Institute (I-BRAIN); William Hahn, Ph.D., assistant professor of mathematical sciences and co-director of the MPCR lab; and Pedram Nimreezi, director of intelligent software at the MPCR lab, CTO of RedGage, and martial arts expert.

“Our MPCR team was chosen by Drone Data’s Astro Robotics group for their deep expertise in cognitive neuroscience, including behavioral, neurophysiological, and computational approaches to studying the brain,” said Ata Sarajedini, Ph.D., dean of FAU’s Charles E. Schmidt College of Science. “Astro is modeled on the human brain and brought to life through machine learning and artificial intelligence, offering an invaluable tool for tackling some of the world’s most complex challenges.”

Read the original article on: SciTechDaily
