Giving a smart device to a tween can feel risky for parents, given the many online threats. To address this, kid-friendly tech company Pinwheel has introduced a new option for families who want to stay connected without resorting to a smartphone.

A Safe, AI-Powered Smartwatch for Kids Hits the Market

Pinwheel has just released the Pinwheel Watch, a smartwatch designed for kids ages 7 to 14. It offers a safer alternative with no access to the internet or social media. Features include parental controls, GPS tracking, a camera, voice-to-text messaging, mini-games and, unexpectedly, an AI chatbot.

The smartwatch sports a sleek black design and a display slightly larger than an Apple Watch’s. Pinwheel sells it for $160, plus a $15 monthly subscription. It became available on Pinwheel.com last week, and we’ve been testing it for the past few days.

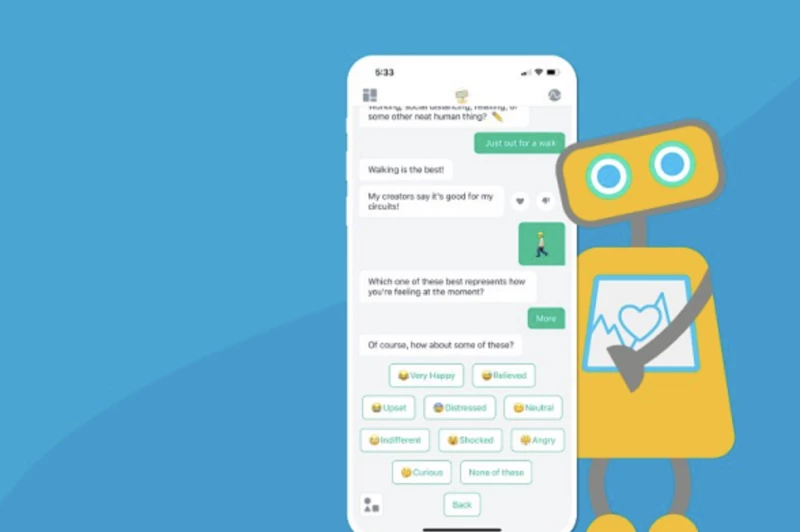

While the device includes standard parental controls, one feature that may raise eyebrows is its built-in AI assistant, “PinwheelGPT.”

According to the company, PinwheelGPT offers a safer alternative to typical AI chatbots, allowing kids to ask about topics ranging from daily curiosities to social situations and homework help.

Still, some parents might be wary. Concerns about AI-generated misinformation persist, and the chatbot’s friendly, responsive nature could encourage kids to prioritize digital interaction over real-life connections with family and peers.

Built-In AI Safeguards Help Keep Conversations Kid-Friendly

The company told us the AI includes built-in safeguards: it detects sensitive or inappropriate topics and, instead of continuing the conversation, redirects kids to talk to a trusted adult. In our brief testing, PinwheelGPT did indeed refuse to respond to violent or inappropriate queries.

Parents also have complete access to all chatbot interactions, including current and deleted conversations, allowing them to monitor usage and intervene if necessary.

Founder Dane Witbeck, a father of four, said parents haven’t pushed back on the feature, since they can easily disable or remove PinwheelGPT through the parental controls if they’re concerned. He also emphasized that the company doesn’t use personal data from any of its users, children or adults, to train its AI models.

Pinwheel introduced its first kid-safe phone in 2020, and by 2024, it had earned the No. 212 spot on the Inc. 5000 list of fastest-growing U.S. companies.

Expanding into smartwatches is a logical next step, helping Pinwheel compete in the nearly $100 billion smart wearables market against giants like Apple and Fitbit. The company aims to carve out a niche by focusing specifically on devices for children.

Unlike competitors such as the Fitbit Ace LTE, which centers on location tracking and health monitoring, Pinwheel’s watch stands out with a more communication-focused experience for kids.

Packed with Kid-Friendly Communication Tools and Fun Features

Beyond its AI capabilities, the smartwatch lets kids make calls and send texts using voice commands or a keyboard. It also includes a camera for selfies and video calls, a voice recording app, and other utilities such as an alarm, calendar, calculator, and mini-games, including one similar to Tetris.

Parents manage controls through the “Caregiver” app, where they can create a “Safelist” of approved contacts for their child and block unwanted numbers.

A “Schedule” feature lets parents customize usage by time of day, for example restricting device access during school or camp. They can also set the watch to allow only emergency contacts during the day and unlock full access later.

Parents can also opt to review their child’s text messages, which is especially helpful for younger users. An AI-powered summary tool provides brief overviews of message threads to keep parents informed.

The Pinwheel Watch is currently available in the U.S., Canada, the U.K., and Australia, with further international expansion on the horizon. It’s also set to launch on Amazon later this summer, although the exact date hasn’t been confirmed yet.

Read the original article on: TechCrunch
