Image Credits: Boom Supersonic

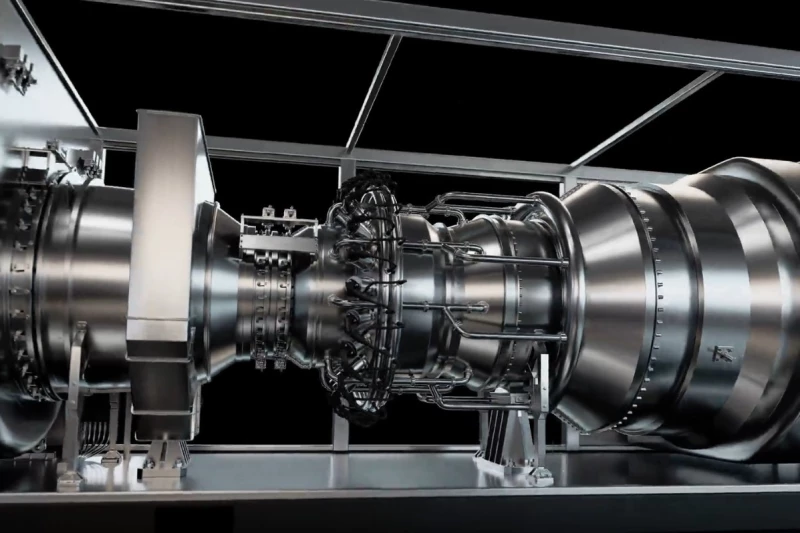

Boom Supersonic says it has repurposed the core technology of its supersonic Symphony jet engine as a new source of revenue: powering energy-intensive AI data centers. The move brings together two rising trends, civilian supersonic travel and artificial intelligence.

Supersonic Startup Seeks Additional Revenue as Overture Advances

Boom Supersonic has made significant progress in its effort to bring the Overture supersonic passenger jet into service. The challenge, however, is that building aircraft capable of exceeding the speed of sound is enormously expensive, and investor funding can only go so far. As a result, the company’s backup strategy is to repurpose its aerospace technology to generate some down-to-earth revenue in the meantime.

Conveniently, another cutting-edge sector is hungry for new solutions—this time for sheer power. AI data centers are cropping up everywhere, as abundantly as weeds in an untended yard. But unlike weeds, these facilities demand massive amounts of electricity for both operation and cooling. Data centers are set to at least double their energy use in the coming years and will likely become the largest single electricity consumer in the United States by 2035.

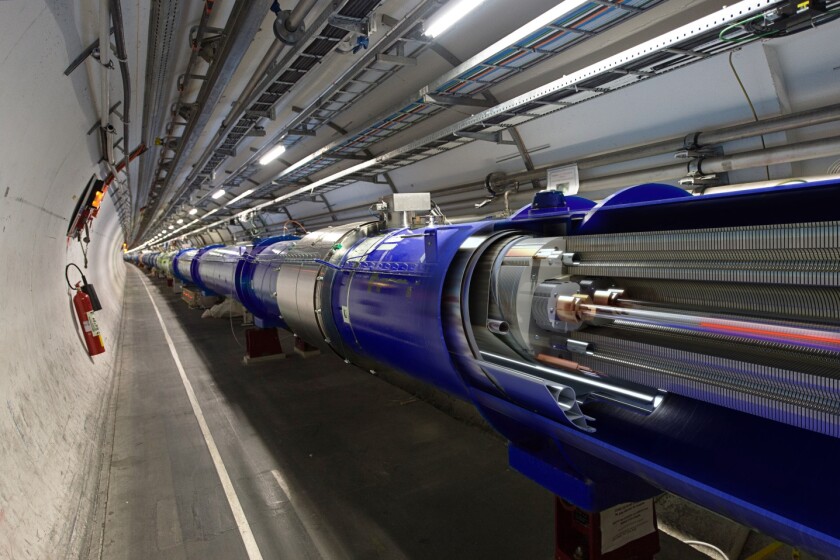

It’s no surprise, then, that numerous tech companies are racing to lock in steady, around-the-clock power supplies—so vital that some are even restarting retired nuclear plants or investing in building new reactors to keep their operations running without interruption.

To help meet this soaring demand for energy, Boom is reengineering its Symphony engine into a natural-gas–powered turbogenerator that can also operate on diesel in a pinch.

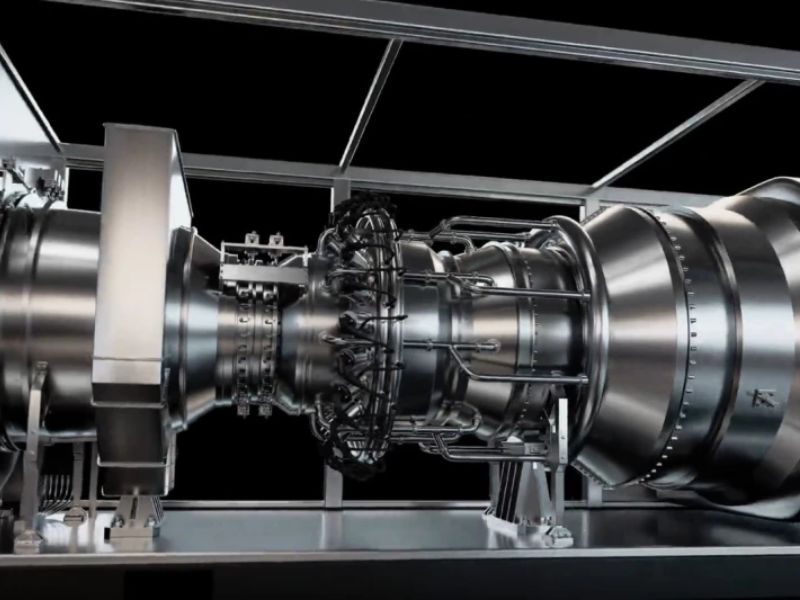

Symphony Engine Reengineered to Generate Electricity

The redesigned system, called Superpower, retains about 80% of Symphony’s original parts. There are two key changes: extra compressor stages replace the fan used for propulsion, and a free power turbine is added to generate electricity.

Superpower also requires no external cooling and can function reliably in ambient temperatures up to 110 °F (43 °C). Despite occupying no more space than a standard shipping container, it can produce 42 MW of power, and Boom claims the unit can be installed within about two weeks once its foundation is prepared.

Superpower Orders Boost Boom’s Finances and Supersonic Ambitions

According to the company, AI infrastructure provider Crusoe has already ordered 29 Superpower units, totaling 1.21 GW of capacity. Boom aims to scale up production to deliver 4 GW annually by 2030.
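The capacity figures above can be cross-checked with a little arithmetic. The sketch below uses only the numbers quoted in this article (42 MW per unit, 29 units ordered, a 4 GW annual target); the function names are illustrative, and the units-per-year result is a derived estimate that assumes every unit is rated at 42 MW, not a figure published by Boom.

```python
import math

# Figures quoted in the article
UNIT_OUTPUT_MW = 42     # rated output of one Superpower unit
UNITS_ORDERED = 29      # size of the Crusoe order
ANNUAL_TARGET_GW = 4    # Boom's stated production goal for 2030


def order_capacity_gw(units: int, unit_mw: float) -> float:
    """Total capacity of an order, in gigawatts."""
    return units * unit_mw / 1000


def units_for_capacity(target_gw: float, unit_mw: float) -> int:
    """Smallest whole number of units needed to reach a capacity target."""
    return math.ceil(target_gw * 1000 / unit_mw)


if __name__ == "__main__":
    print(f"Crusoe order: {order_capacity_gw(UNITS_ORDERED, UNIT_OUTPUT_MW):.3f} GW")
    print(f"Units per year for target: {units_for_capacity(ANNUAL_TARGET_GW, UNIT_OUTPUT_MW)}")
```

Multiplying out gives 1.218 GW for the order, in line with the roughly 1.21 GW the company cites, and suggests that the 4 GW goal would mean building on the order of 96 units a year under the same 42 MW assumption.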

The company believes this new revenue stream will help solidify its plans for a supersonic passenger aircraft.

“Supersonic technology is a catalyst—not just for faster travel, but now for AI as well,” said Blake Scholl, Boom Supersonic’s founder and CEO. “With this funding and our first Superpower order, Boom is financially positioned to deliver both our engine and our airliner.”

Read the original article on: New Atlas