Generative AI and robotics are moving toward a future in which an object can be produced within minutes simply by asking for it. MIT researchers have taken a step in that direction with a speech-to-reality system: an AI-driven workflow that lets users give spoken instructions to a robotic arm and "talk" objects into existence, including furniture assembled in just a few minutes.

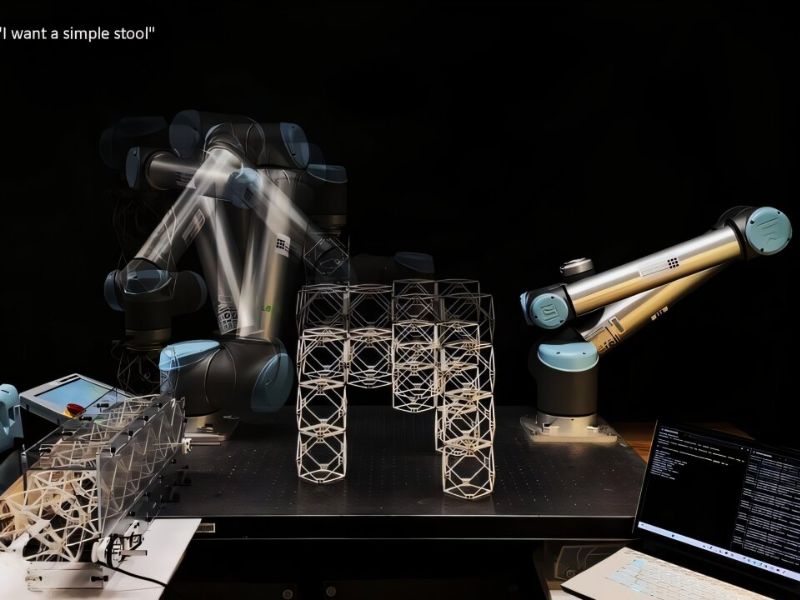

Using the speech-to-reality system, a tabletop robotic arm can take spoken commands—such as “I want a simple stool”—and assemble objects from modular parts. So far, the team has built stools, shelves, chairs, a small table, and even decorative pieces like a dog statue.

“We’re integrating natural language processing, 3D generative AI, and robotic assembly,” says Alexander Htet Kyaw, an MIT graduate student and Morningside Academy for Design fellow. “Until now, no one had combined these fast-moving research areas in a way that lets you create physical objects from a simple voice prompt.”

The concept originated when Kyaw, a student in Architecture and in Electrical Engineering and Computer Science, took Professor Neil Gershenfeld’s course “How to Make Almost Anything.” There, he created the first version of the speech-to-reality system. He later advanced the project at MIT’s Center for Bits and Atoms (CBA), working with graduate students Se Hwan Jeon from Mechanical Engineering and Miana Smith from CBA.

How the Speech-to-Reality System Operates

The speech-to-reality system starts by using speech recognition to capture the user’s request and pass it to a large language model. Next, a 3D generative AI model produces a digital mesh of the desired object, and a voxelization algorithm converts that mesh into modular building components.
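The article does not describe the voxelization algorithm itself, so the following is only a minimal sketch of the general idea: snapping geometry onto a coarse grid whose cells each stand for one modular building block. It assumes the generated mesh has already been reduced to a list of 3D sample points; the function name `voxelize`, the `cell_size` parameter, and the sample coordinates are all hypothetical and not taken from the MIT system.

```python
from math import floor


def voxelize(points, cell_size):
    """Return the set of integer grid cells (i, j, k) occupied by the points.

    Assumption: `points` are (x, y, z) samples taken from the mesh, and
    `cell_size` is the edge length of one modular block in the same units.
    """
    if cell_size <= 0:
        raise ValueError("cell_size must be positive")
    return {
        (floor(x / cell_size), floor(y / cell_size), floor(z / cell_size))
        for x, y, z in points
    }


# Hypothetical sample points; the first two fall into the same cell.
sample_points = [
    (0.1, 0.1, 0.1),
    (0.9, 0.2, 0.3),
    (1.2, 0.1, 0.1),
    (0.2, 0.2, 1.7),
]
occupied = voxelize(sample_points, cell_size=1.0)
print(sorted(occupied))  # [(0, 0, 0), (0, 0, 1), (1, 0, 0)]
```

A larger `cell_size` yields fewer, coarser blocks, which is one simple way a part-count limit could be respected.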

Geometric processing then adjusts the AI-generated structure to make it buildable, accounting for factors like part count, overhangs, and connections between pieces. After this, the system generates a valid assembly sequence and calculates robotic arm paths so the robot can construct the object from the user’s instructions.
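How the system computes its assembly sequence is not spelled out in the article. As a rough illustration of what a "valid" order can mean, the sketch below uses a simple greedy rule that is an assumption, not the team's method: a block may be placed only if it rests on the ground layer or touches the face of a block that is already in place, and lower blocks are placed first. The function names and the stool-like example shape are hypothetical.

```python
# Offsets to the six face-adjacent neighbours of a grid cell.
FACE_NEIGHBORS = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def is_attachable(voxel, placed, ground_z):
    """A block is attachable if it sits on the ground layer or shares a face
    with a block that has already been placed."""
    x, y, z = voxel
    if z == ground_z:
        return True
    return any((x + dx, y + dy, z + dz) in placed for dx, dy, dz in FACE_NEIGHBORS)


def assembly_sequence(voxels):
    """Greedily order blocks bottom-up so each one is attachable when placed.

    Raises ValueError if some block can never be reached (a floating part).
    """
    remaining = set(voxels)
    if not remaining:
        return []
    ground_z = min(z for _, _, z in remaining)
    placed, order = set(), []
    while remaining:
        candidates = [v for v in remaining if is_attachable(v, placed, ground_z)]
        if not candidates:
            raise ValueError("structure contains blocks that cannot be attached")
        # Lowest layer first; ties broken by y, then x, for a repeatable order.
        nxt = min(candidates, key=lambda v: (v[2], v[1], v[0]))
        order.append(nxt)
        placed.add(nxt)
        remaining.remove(nxt)
    return order


# Hypothetical stool-like shape: two one-block legs joined by a three-block top.
stool = {(0, 0, 0), (2, 0, 0), (0, 0, 1), (1, 0, 1), (2, 0, 1)}
print(assembly_sequence(stool))
# [(0, 0, 0), (2, 0, 0), (0, 0, 1), (1, 0, 1), (2, 0, 1)]
```

In this toy rule the middle block of the top is allowed to hang from its side neighbor, which loosely mirrors the idea of blocks held together by connectors. A real planner would also have to check that the robotic arm can reach each position without colliding with blocks already placed.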

By relying on natural language, the system lowers the barrier to design and fabrication for people with no background in 3D modeling or robotics. And unlike 3D printing, which can require hours or even days, this approach produces objects in just minutes.

“This project serves as a bridge between humans, AI, and robots to jointly shape our environment,” Kyaw says. “Imagine saying, ‘I want a chair,’ and having a real chair appear before you just five minutes later.”

Enhancements and a Wider Vision

The team plans to improve the furniture's load-bearing capacity by replacing the magnets that currently connect the modular cubes with stronger, more durable joints.

“We’ve also created workflows to turn voxel-based structures into workable assembly sequences for small, distributed mobile robots, which could enable scaling this approach to objects of any size,” says Smith.

Because the objects are built from modular components, they can be taken apart and the parts reassembled into something else, which reduces waste. A sofa, for example, could be turned into a bed once the sofa is no longer needed.

Drawing on his experience with gesture recognition and augmented reality for robot-assisted fabrication, Kyaw is now working to integrate both speech and gesture controls into the speech-to-reality system.

Inspired by the replicator from Star Trek and the robots in Big Hero 6, Kyaw shares his vision:

“I want to make it easier for people to create physical objects quickly, accessibly, and sustainably,” he says. “I’m aiming for a future where you can truly control matter itself—where reality can be generated on demand.”

The team presented their paper, “Speech to Reality: On-Demand Production using Natural Language, 3D Generative AI, and Discrete Robotic Assembly,” at the ACM Symposium on Computational Fabrication (SCF ’25) held at MIT on November 21.

Read the original article on: Tech Xplore
