A novel AI model is showing an exceptional capacity to predict human behavior by analyzing visual and contextual information in real time. Instead of merely responding to motion, it infers what individuals are likely to do next.

Researchers at Texas A&M University’s College of Engineering, in collaboration with the Korea Advanced Institute of Science and Technology, have unveiled a new AI system called OmniPredict, aimed at enhancing safety for self-driving vehicles.

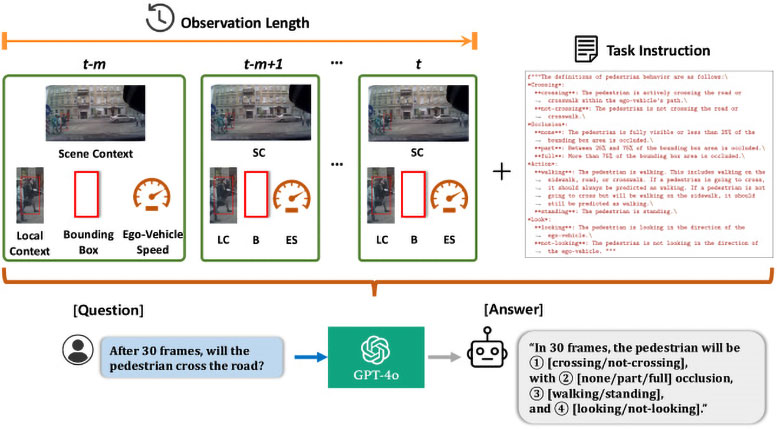

OmniPredict is the first system to leverage a Multimodal Large Language Model (MLLM) to anticipate pedestrian behavior. Using the same core technology as chatbots and image-recognition tools, it combines visual input with context to predict a person’s next move in real time.

Early trials suggest that OmniPredict can achieve high accuracy even without task-specific training.

“Cities are unpredictable. Pedestrians can be unpredictable,” said Dr. Srikanth Saripalli, lead researcher and director of the Center for Autonomous Vehicles. “Our model envisions machines that anticipate, not just observe, human behavior.”

A Modern Take on Streetwise Intelligence

OmniPredict adds street awareness to autonomous driving, mimicking human intuition.

Rather than simply reacting to a pedestrian’s present actions, it seeks to predict their next move. If successful, this method could reshape how self-driving vehicles handle crowded urban environments and move more seamlessly through busy streets.

“It paves the way for safer self-driving vehicle operations, reduces pedestrian-related accidents, and moves from reactive responses to proactively preventing hazards,” Saripalli explained.

The psychological experience could change as well.

Picture standing at a crosswalk and, instead of making eye contact with a human driver, knowing that an AI is monitoring your position and anticipating your next move.

“Fewer tense standoffs, fewer close calls, and smoother traffic flow. That’s all possible because vehicles can interpret not just movement, but—most importantly—intent,” Saripalli added.

Reading People in Motion

OmniPredict’s impact goes well beyond busy city streets, crowded intersections, or hectic crosswalks.

“We’re unlocking opportunities for innovative applications,” Saripalli said. A system that detects and predicts threatening behavior could have significant real-world applications.

More generally, an AI that interprets posture shifts, hesitation, body orientation, or stress indicators could revolutionize how military and emergency personnel operate in critical situations.

“It could identify early warning signs of risk and offer an additional layer of situational awareness,” Saripalli explained.

In such situations, this approach could enable personnel to quickly understand complex environments and make faster, better-informed decisions.

“Our aim isn’t to replace humans,” Saripalli added, “but to support them with a more intelligent partner.”

Testing in the Field

Conventional self-driving systems depend on computer-vision models trained on vast collections of images and datasets. While effective, these models often have difficulty adjusting to dynamic situations.

“Shifting weather, unpredictable human behavior, unusual events, and the inherent chaos of city streets can challenge even the most advanced vision-based systems,” Saripalli explained.

By building on a multimodal large language model instead, OmniPredict not only observes a scene but also interprets it, anticipating the movements of each element and adjusting in real time.

The team evaluated OmniPredict using two of the most challenging pedestrian behavior benchmarks—JAAD and WiDEVIEW—without providing any prior specialized training.

Published in Computers & Electrical Engineering, the study found that OmniPredict achieved 67% accuracy, outperforming the latest models by 10%.

It sustained strong performance even when contextual factors were added, such as partially obscured pedestrians or individuals looking toward a vehicle.

The AI also demonstrated faster reaction times, better generalization across different road conditions, and more robust decision-making than conventional systems—promising indicators for real-world use.

“OmniPredict’s results are exciting, and its adaptability points to even broader potential applications,” Saripalli said.

The Next Turn in Autonomous Anticipation

Although still a research prototype rather than a production-ready system, OmniPredict hints at a future in which autonomous vehicles depend less on sheer visual data and more on understanding human behavior.

By merging reasoning with perception, the system creates a new form of shared intelligence—making the world not just automated, but far more intuitive.

“OmniPredict doesn’t merely observe our actions; it grasps the reasons behind them and can anticipate what we’re likely to do next,” Saripalli explained.

Read the original article on: SciTechDaily
