Researchers from the Istituto Italiano di Tecnologia (IIT) in Genoa and Brown University in the U.S. found that people can come to treat a humanoid robot’s hand as part of their own body schema after working with the robot on a collaborative task, such as slicing a bar of soap.

The study, published in iScience, could lead to improved design of robots intended to work closely with humans, such as those used in rehabilitation settings.

Led by Alessandra Sciutti, Principal Investigator of the CONTACT unit at IIT, in collaboration with Brown University professor Joo-Hyun Song, the project investigated whether the unconscious processes that guide human-to-human interaction also apply when a person interacts with a humanoid robot.

Exploring the Near-Hand Effect and the Brain’s Adaptable Body Schema

The researchers examined a phenomenon called the “near-hand effect,” where having a hand close to an object shifts a person’s visual attention, as the brain prepares to interact with the object. The study also looked at how the human brain forms a “body schema”—an internal map that helps us move efficiently by incorporating useful external objects into our sense of self.

This body schema, shaped unconsciously by external stimuli, allows us to navigate spaces, avoid obstacles, or reach for objects without needing to look directly at them. Tools, like a tennis racket, can become part of this mental map if they assist with a task, and with repeated use they start to feel like an extension of the body. Because the body schema is flexible and constantly adapting, Sciutti’s team investigated whether a robot could also be integrated into it.

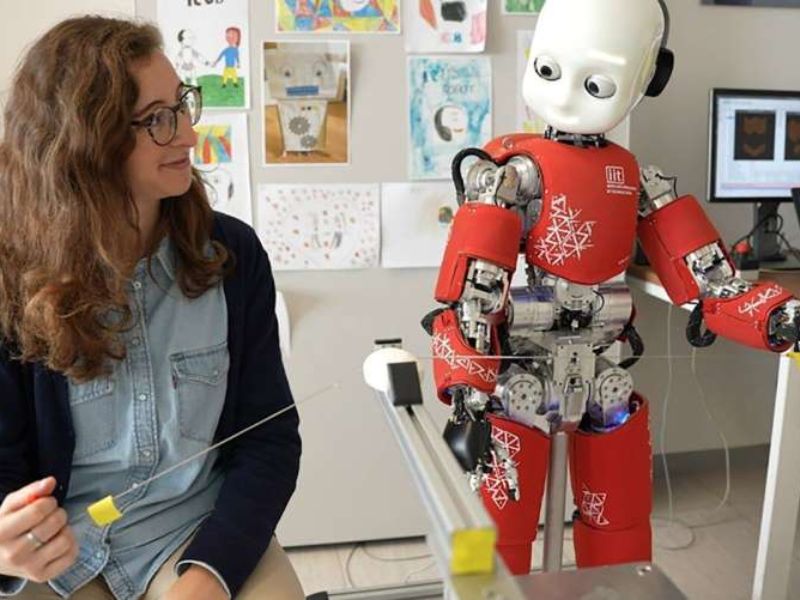

Giulia Scorza Azzarà, a Ph.D. student at IIT and the study’s lead author, designed and analyzed experiments in which participants performed a shared task with iCub, IIT’s child-sized humanoid robot. Together, they sliced a bar of soap with a steel wire, taking turns pulling it: the human pulled once, then the robot.

Testing Robotic Hand Integration Using the Posner Cueing Task

To assess whether the robotic hand had been integrated into the participants’ body schema, researchers used the Posner cueing task. In this test, participants press a key as quickly as possible when an image appears on one side of a screen. Faster responses on one side indicate that attention is biased toward it, for example by an object placed nearby.

Results from 30 participants revealed a clear pattern: individuals responded faster when images appeared next to the robot’s hand, suggesting their brains perceived it as if it were their own. Control experiments confirmed that this effect occurred only among those who had completed the soap-cutting task with the robot.
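To make the measurement concrete, the comparison described above can be sketched as a difference in mean reaction times between images shown near the robot's hand and images shown on the other side. This is only an illustrative sketch, not the study's analysis: the function name `near_hand_effect`, the `"near"`/`"far"` labels, and the reaction times are all hypothetical and invented for the example.

```python
from statistics import mean


def near_hand_effect(trials):
    """Return mean far-side RT minus mean near-side RT, in milliseconds.

    `trials` is a list of (side, reaction_time_ms) pairs, where side is
    "near" (image next to the robot's hand) or "far" (image on the other side).
    A positive result means responses were faster near the hand.
    """
    near = [rt for side, rt in trials if side == "near"]
    far = [rt for side, rt in trials if side == "far"]
    if not near or not far:
        raise ValueError("need at least one trial on each side")
    return mean(far) - mean(near)


# Hypothetical reaction times (ms), made up for illustration only;
# these are NOT data from the study.
example_trials = [("near", 310), ("far", 340), ("near", 305), ("far", 335)]
effect_ms = near_hand_effect(example_trials)
print(f"near-hand effect: {effect_ms} ms")
```

A positive value here corresponds to the pattern the article describes, with faster responses next to the robot's hand. A real analysis would also need per-participant averaging and statistical testing, which this sketch leaves out.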

The intensity of the near-hand effect was influenced by how the humanoid robot moved. When iCub’s movements were wide, smooth, and well-coordinated with the participant’s actions, the effect was stronger—leading to a greater integration of the robot’s hand into the person’s body schema. Physical proximity also mattered: the closer the robot’s hand was to the participant during the soap-slicing task, the more pronounced the effect.

Participant Perception and Empathy Strengthen Robot Integration

To understand how participants perceived the robot after the shared activity, researchers used questionnaires. Results showed that the more competent and likable participants found iCub, the stronger the cognitive effect. When participants attributed human-like traits or emotions to the robot, the sense of integration deepened—suggesting that empathy and a sense of partnership strengthened the mental connection with the robotic hand.

The research team conducted controlled experiments with a humanoid robot, laying the groundwork for a better understanding of how humans and machines interact. Psychological factors will play a key role in designing robots that can respond to human cues and offer a more natural, efficient user experience. These capabilities are especially important for applying robotics in fields like motor rehabilitation, virtual reality, and assistive technologies.

Read the original article on: Tech Xplore
