At MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), a soft robotic hand slowly curls its fingers around a small object. What’s remarkable isn’t its mechanical design or any built-in sensors; in fact, the hand has no sensors at all. Instead, the system relies entirely on a single camera that watches the robot move and uses that visual feedback to steer the hand.

This ability comes from a new system developed by CSAIL researchers that takes a fresh approach to robot control. Rather than relying on hand-crafted models or elaborate sensor arrays, the system lets robots learn how their bodies respond to commands from visual input alone. Called Neural Jacobian Fields (NJF), the method gives robots a kind of self-awareness of their own bodies.

“We’re moving away from programming robots step by step to teaching them,” says Sizhe Lester Li, a PhD student at MIT CSAIL and lead author of the study. “Right now, many robotic tasks require heavy engineering and coding. Our hope is that in the future, we’ll simply show a robot a task and let it figure out how to do it on its own.”

Shifting the Focus from Hardware to Control Through Self-Learned Models

The team realized that the main barrier to low-cost, adaptable robotics isn’t hardware but control. Traditionally, robots are built rigid and packed with sensors so that engineers can construct an accurate digital twin, a precise mathematical model used to guide their movements. Those assumptions break down for soft, flexible, or irregularly shaped robots. Instead of forcing machines to fit a model, NJF lets each robot learn its own model from observation.

Decoupling modeling from hardware design could greatly expand the design space for robotics. In soft robots, engineers often add sensors or reinforce parts of the structure just to make modeling and control tractable. NJF removes that constraint: because it needs no built-in sensors or design tweaks, designers are free to explore unconventional robot shapes without worrying about how they will be controlled.

“Think about how you learn to move your fingers—you try different motions, watch what happens, and adjust,” explains Li. “That’s exactly how our system works. It tests random actions and learns how each one affects the robot’s body.”

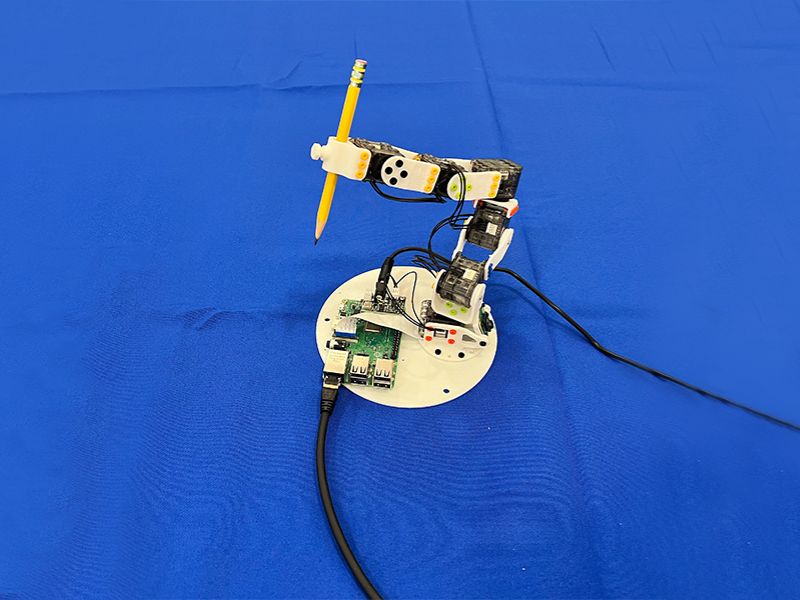

NJF has shown strong results across a variety of robotic systems. The researchers tested it on a soft robotic hand, a rigid Allegro hand, a 3D-printed robotic arm, and a rotating platform with no embedded sensors. In every case, the system learned the robot’s shape and how it responds to control signals using nothing but vision and trial and error.

Expanding NJF’s Potential

Looking ahead, the researchers believe NJF has applications well beyond the lab. Robots using this system could handle precise farm work, operate on construction sites, or navigate unpredictable environments without complex sensors.

NJF uses a neural network to learn two things about a robot: its 3D shape and how it responds to control signals. The system builds on neural radiance fields (NeRF), a technique that reconstructs 3D scenes by mapping spatial coordinates to color and density. NJF extends this idea by also learning a Jacobian field, which predicts how each point on the robot’s body moves in response to motor commands.
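Here is a concrete version of the Jacobian-field idea. Take a point x on the robot’s body and a small change δu in the m motor commands. The field returns a 3×m matrix J(x), and the point’s displacement is approximately δx ≈ J(x)·δu. The sketch below assumes that linearized relation. It replaces the trained network with a hand-written toy function (`jacobian_field` is a hypothetical name), so it illustrates the idea only and is not the researchers’ implementation.

```python
import numpy as np


def jacobian_field(point: np.ndarray) -> np.ndarray:
    """Toy stand-in for a learned Jacobian field (hypothetical, not NJF's network).

    Returns a 3 x 2 matrix J(point) for a made-up two-motor robot; column k is
    the predicted motion of `point` per unit change of motor k.
    """
    _, _, z = point
    return np.array([
        [z, 0.0],    # motor 0 pushes points along x, more strongly the higher they sit
        [0.0, 1.0],  # motor 1 pushes every point along y by the same amount
        [0.0, 0.0],  # neither motor moves points along z
    ])


def predict_motion(points: np.ndarray, delta_u: np.ndarray) -> np.ndarray:
    """Predict each point's displacement with the linearization delta_x ≈ J(x) @ delta_u."""
    return np.stack([jacobian_field(p) @ delta_u for p in points])


# Three points stacked vertically along the robot's body.
points = np.array([[0.0, 0.0, 0.0],
                   [0.0, 0.0, 1.0],
                   [0.0, 0.0, 2.0]])
delta_u = np.array([0.1, 0.0])  # small nudge to motor 0 only

# Each row is one point's displacement; the x-displacements are 0.0, 0.1 and 0.2.
print(predict_motion(points, delta_u))
```

The same nudge moves the top point twice as far as the middle one. A Jacobian field captures this kind of position-dependent response, and a single global gain cannot.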

To train the system, the robot performs random movements while multiple cameras record it. No human supervision or prior knowledge of the robot’s structure is needed; the model infers how control signals affect motion simply by watching.
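How can a Jacobian be learned purely from observation? Here is a minimal sketch, assuming a single tracked point whose motion responds linearly to three motors. The steps are to issue random commands, record the displacements a camera would measure, and fit J by ordinary least squares. NJF instead trains a neural network on multi-view video of the whole body. The least-squares fit, the simulated `observe` function, and the `TRUE_J` matrix are all hypothetical stand-ins chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical "true" response of one tracked point to three motors.
# The learner never reads this matrix; it is used only to simulate observations.
TRUE_J = np.array([
    [0.5, 0.0, 0.0],
    [0.0, 0.0, 1.2],
    [0.0, -0.3, 0.0],
])


def observe(delta_u: np.ndarray) -> np.ndarray:
    """Simulated camera measurement of the point's displacement, with noise."""
    return TRUE_J @ delta_u + rng.normal(scale=0.01, size=3)


# 1. Try random motor commands and record what the "camera" sees.
num_trials = 200
U = rng.uniform(-1.0, 1.0, size=(num_trials, 3))  # one command per row
D = np.array([observe(u) for u in U])             # one displacement per row

# 2. Fit the Jacobian by least squares: D ≈ U @ J.T
J_T, *_ = np.linalg.lstsq(U, D, rcond=None)
J_est = J_T.T

# 3. Column norms: how strongly each motor moves this point.
influence = np.linalg.norm(J_est, axis=0)

print(np.round(J_est, 2))
print("per-motor influence:", np.round(influence, 2))
```

The column norms show how a "which motor moves which part" assignment can emerge without being programmed in. A column near zero would mean that motor barely moves the tracked point.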

Real-Time Control with a Single Camera

After training, the robot needs only a single monocular camera for real-time, closed-loop control at roughly 12 hertz, or about 12 control updates per second. At that rate the robot can continuously observe itself, plan, and react as it moves, which makes NJF more practical than many slower, simulation-based approaches to controlling soft robots.
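Closed-loop control with a Jacobian can be sketched as a repeated observe-and-correct cycle. At each tick, the controller locates a tracked point, compares it with the target, and solves for the command change that the Jacobian predicts will shrink the error. The sketch assumes a made-up two-motor plant (`robot_point`) and uses its analytic Jacobian in place of a learned model’s per-frame prediction. It also picks damped least squares as the solver. These are illustrative choices, not details reported for NJF. The 12 Hz rate only sets the simulated time step; nothing waits in real time.

```python
import numpy as np

CONTROL_HZ = 12          # control rate reported in the article
DT = 1.0 / CONTROL_HZ    # simulated seconds per control step


def robot_point(u: np.ndarray) -> np.ndarray:
    """Hypothetical plant: 3D position of a tracked point given two motor commands."""
    return np.array([np.sin(u[0]), u[1] + 0.1 * u[0] ** 2, 0.0])


def jacobian_estimate(u: np.ndarray) -> np.ndarray:
    """Stand-in for the Jacobian a trained model would predict from the current frame.

    Here it is simply the analytic derivative of `robot_point`.
    """
    return np.array([
        [np.cos(u[0]), 0.0],
        [0.2 * u[0], 1.0],
        [0.0, 0.0],
    ])


def damped_least_squares_step(J, error, damping=1e-2, gain=0.5):
    """Command change du minimizing ||J du - gain*error||^2 + damping*||du||^2."""
    m = J.shape[1]
    A = J.T @ J + damping * np.eye(m)
    return np.linalg.solve(A, J.T @ (gain * error))


target = np.array([0.5, 0.3, 0.0])
u = np.zeros(2)

for step in range(5 * CONTROL_HZ):   # at most ~5 simulated seconds
    observed = robot_point(u)        # stand-in for locating the point in the image
    error = target - observed
    if np.linalg.norm(error) < 1e-4:
        break
    u = u + damped_least_squares_step(jacobian_estimate(u), error)

final_error = np.linalg.norm(target - robot_point(u))
print(f"{step} steps (~{step * DT:.2f} s simulated), final error {final_error:.1e}")
```

The damping term keeps each command update bounded when J is close to singular. A gain below 1 trades speed for robustness to errors in the predicted Jacobian.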

In early tests, the system learned this mapping for simple 2D fingers and sliders from only a handful of examples. By modeling how specific points move or deform in response to actions, NJF builds a detailed map of controllability. This internal model lets the system generalize motion across the robot’s body, even when the data are noisy or incomplete.

“What’s fascinating is that the system autonomously learns which motors influence which parts of the robot,” explains Li. “It’s not pre-programmed—this understanding emerges through learning, similar to how a person figures out the controls on a new device.”

The Future Is Flexible

For many years, robotics focused on rigid, easily predictable machines—like the industrial robot arms used in factories—because their simplicity made them easier to control. However, the field is increasingly shifting toward soft, bio-inspired robots that can interact with the real world more naturally. The challenge? These robots are much more difficult to model.

“Today’s robotics often seems inaccessible due to expensive sensors and complicated programming,” says Vincent Sitzmann, senior author and MIT assistant professor who leads the Scene Representation group. “With Neural Jacobian Fields, our aim is to reduce those barriers and make robotics more affordable, flexible, and widely accessible. Vision serves as a robust, dependable sensor that enables robots to function in chaotic, unpredictable settings—from farms to construction sites—without relying on costly infrastructure.”

“Visual input alone enables control and localization without GPS or complex sensors,” says co-author Daniela Rus, director of MIT CSAIL. That capability opens the door to adaptive behavior in dynamic settings, from drones flying without maps to robots working in cluttered or rough environments. By learning from visual feedback, the system builds internal models that support flexible, self-supervised control where traditional methods fall short.

Toward a More Accessible, Phone-Based Training Method for Robots

Currently, training NJF requires multiple cameras and has to be done separately for each robot. However, the researchers already envision a more user-friendly version. In the future, hobbyists might simply record a robot’s random movements with a smartphone, much as you might film a rental car before driving off, and use that video to generate a control model without any technical expertise or specialized equipment.

The system can’t yet generalize across different robots, and it lacks touch sensing, which limits its usefulness in contact-heavy tasks. The team aims to address these limitations by improving generalization, handling occlusions, and extending the model’s spatial and temporal reasoning.

“NJF gives robots a kind of embodied self-awareness: an intuitive sense of how their bodies move and respond, using only visual input,” says Li. The process mirrors how humans learn to control their limbs, and this kind of understanding is key to flexible manipulation in complex environments. Ultimately, the work marks a shift from hand-coded models toward robots that learn through observation and interaction.

A Collaborative Effort Bridging Vision, Learning, and Soft Robotics

The research combines expertise in computer vision and self-supervised learning from lead PI Vincent Sitzmann’s lab with advancements in soft robotics from the lab of MIT CSAIL Director and EECS Professor Daniela Rus. Co-authors of the paper include Sitzmann, Rus, and Li, along with CSAIL PhD students Annan Zhang SM ’22 and Boyuan Chen, undergraduate researcher Hanna Matusik, and postdoctoral fellow Chao Liu.

The study received funding from the Solomon Buchsbaum Research Fund via MIT’s Research Support Committee, an MIT Presidential Fellowship, the National Science Foundation, and the Gwangju Institute of Science and Technology. The team’s findings were published in Nature this month.

Read the original article on: MIT CSAIL
