As we grow up, we learn to apply just the right amount of force to move objects and to avoid touching things like a hot pan with bare hands. Now, engineers have created a robotic hand that can do the same.

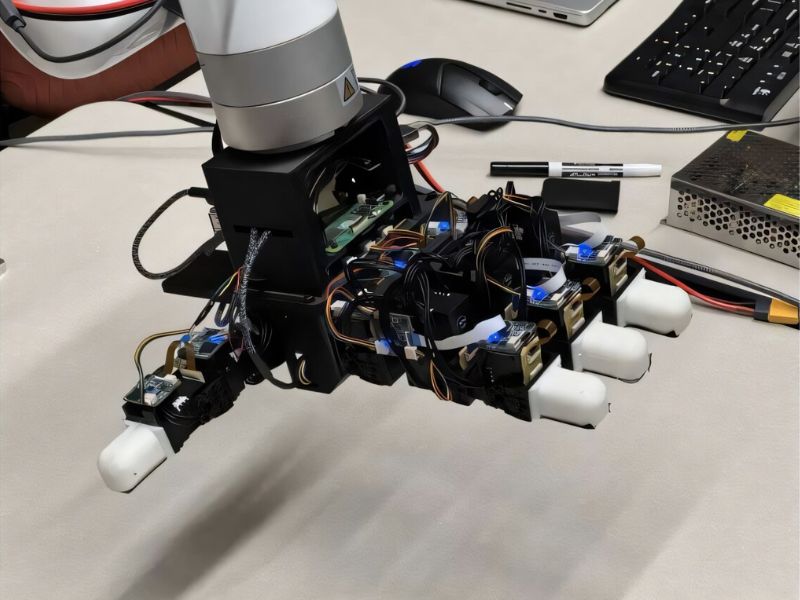

A student team partnered with USC Viterbi computer science assistant professor Daniel Seita to create the MOTIF Hand, designing it to be multimodal—equipped with multiple sensory functions. It features sensors for depth, force, and temperature, enabling it to detect and respond to changes in these areas.

These advanced sensing abilities not only improve research possibilities in robotics but also help extend the hand’s durability by preventing heat-related damage. Additionally, its force sensitivity offers practical potential for real-world applications.

“In settings like factories, robots need to apply force to move objects into place, which means they have to measure how much force they’re using,” Seita explained. “A force sensor like this can be really useful to ensure the robot is applying the correct amount of pressure.”

“We haven’t seen anyone build a hand quite like this before,” he added.

Heated Innovation

The MOTIF Hand is an evolution of the LEAP Hand, developed by a Carnegie Mellon research team in 2023. Its main breakthrough lies in incorporating human-like sensory abilities. According to Seita, the MOTIF Hand’s enhanced, lifelike features and precision could open the door to a wide range of uses—from factory tasks to cooking and welding.

The robot’s temperature-sensing ability comes from a thermal camera embedded in its palm. Seita and his team of USC Viterbi graduate students set out to design a hand that mimics human awareness of temperature.

“When we’re cooking, we often hold our hand near a hot pot to gauge its heat before touching it, helping us avoid burns or injury,” Seita explained. “We wanted to give robots that same kind of instinct.”

Hanyang Zhou, a co-author of the research paper “The MOTIF Hand: A Robotic Hand for Multimodal Observations with Thermal, Inertial, and Force Sensors” and recent Viterbi School computer science master’s graduate, explained that the system detects temperature intuitively by positioning the hand close to the object.

“We wondered if there was a way to pick up a signal without needing to make contact,” Zhou said. “That’s why we placed an infrared camera directly in the palm.” The paper is currently available on the arXiv preprint server.

In other words, the MOTIF Hand can sense temperature using its thermal camera without making contact—simply bringing the hand near an object allows the camera to detect its heat.
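As a rough illustration of that non-contact check, here is a minimal Python sketch. It is not the team's implementation. It assumes the palm camera delivers each frame as a grid of per-pixel temperatures in degrees Celsius, and the function names and the 50 °C limit are hypothetical choices made for this example.

```python
from typing import Sequence

# Hypothetical safety limit in degrees Celsius (an assumption, not a value from the paper).
SAFE_TOUCH_LIMIT_C = 50.0


def hottest_pixel(frame: Sequence[Sequence[float]]) -> float:
    """Return the highest temperature in a 2D thermal frame (rows of Celsius readings)."""
    return max(max(row) for row in frame)


def is_safe_to_touch(frame: Sequence[Sequence[float]],
                     limit_c: float = SAFE_TOUCH_LIMIT_C) -> bool:
    """Report whether every pixel in the frame is below the temperature limit."""
    return hottest_pixel(frame) < limit_c


# Example: a tiny 2x2 frame in which one pixel sees a hot pan.
frame_near_pan = [[22.0, 23.5], [24.0, 180.0]]
print(is_safe_to_touch(frame_near_pan))
```

Checking the hottest pixel is the most cautious choice. A real system would probably smooth out sensor noise and account for how distance affects the reading.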

“You Need to Experience it Firsthand”

Seita, Zhou, and their team aimed to make sensing temperature and force feel more natural—mirroring how humans experience these sensations. For instance, force is invisible to the eye and only understood through touch. The MOTIF Hand is designed to replicate this tactile understanding, enabling more realistic robotic responses to force, such as gauging an object’s weight.

“We, as humans, can’t see force; we have to feel it. But how can a robot hand do the same?” Zhou wondered. “If I’m unsure whether a water bottle is full, I just flick or shake it to find out.”

The inertial measurement unit (IMU) sensors integrated into the MOTIF Hand let it perform the same basic test: the hand can flick or shake an object to assess its weight, much as a human would.
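One simple way to picture the shake test is Newton's second law, F = m·a. The Python sketch below is an illustration under stated assumptions, not the method described in the paper. It assumes the hand can record, along the shake axis, both the net force on the object (with gravity already subtracted) and the matching acceleration. The function name is hypothetical.

```python
from typing import Sequence


def estimate_mass_kg(forces_n: Sequence[float],
                     accels_m_s2: Sequence[float]) -> float:
    """Least-squares fit of F = m * a through the origin; returns m in kilograms."""
    if len(forces_n) != len(accels_m_s2):
        raise ValueError("force and acceleration samples must have the same length")
    denom = sum(a * a for a in accels_m_s2)
    if denom == 0:
        raise ValueError("accelerations are all zero; shake the object first")
    return sum(f * a for f, a in zip(forces_n, accels_m_s2)) / denom


# Example with made-up samples from a short shake (assumed, not measured data).
forces = [1.0, -2.0, 3.0]   # newtons
accels = [2.0, -4.0, 6.0]   # metres per second squared
print(estimate_mass_kg(forces, accels))
```

Fitting over many samples rather than dividing a single force by a single acceleration makes the estimate less sensitive to noise in any one reading.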

Building on Carnegie Mellon’s open-source LEAP Hand, Seita and his team plan to make the MOTIF Hand open-source as well, so that others can further develop this sensory technology.

“Making research openly available is crucial for progress in the field,” Seita said. “The more people who use our hand, the more it benefits the research community.”

Zhou described the MOTIF Hand’s sensing upgrades as a “platform” that he hopes the broader robotics community will build on.

“We want to make it simple and accessible for as many research teams as possible, provided they’re interested in this kind of platform,” Zhou said.

Read the original article on: Tech Xplore